Posted by Anita on February 24th, 2008 — in milestones, screenshots

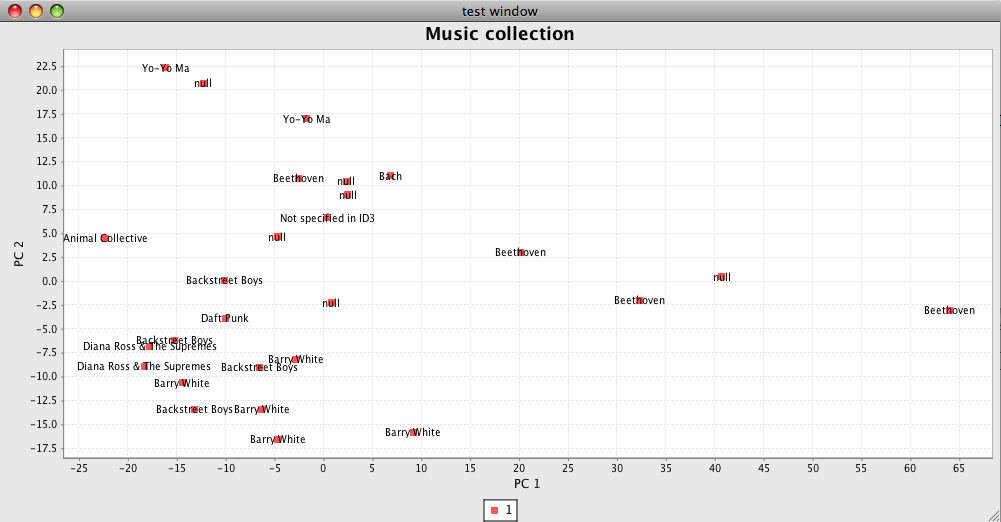

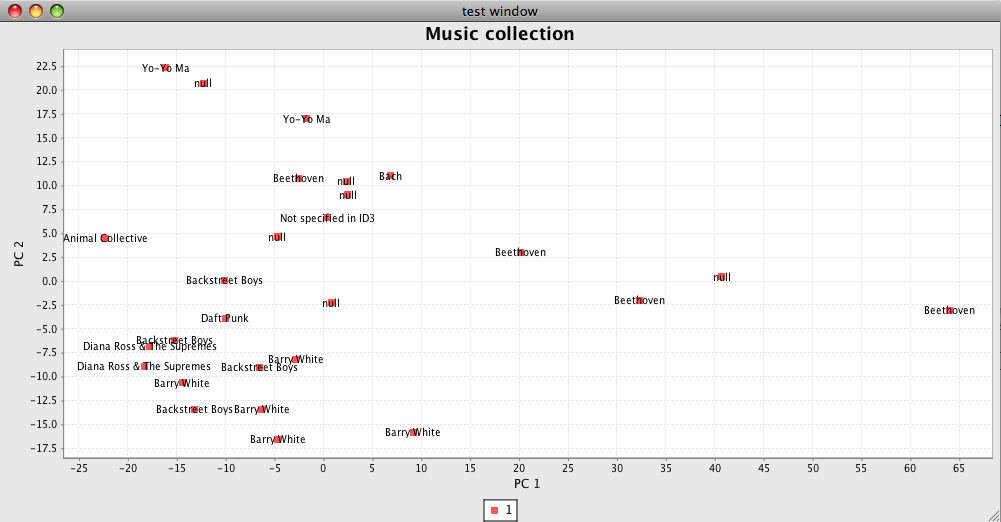

OK, this is pretty ugly… but I’m very excited to see it. It’s the first test image to come out of the PCA engine I’ve built, which maps test tracks using their timbre values, number of sections, duration, and time signature. JFreeChart was a great recommendation; thanks, Paul :)

(The points labeled “null” are the Bach tracks.)

I’ll use this kind of interface as I clean up the code and test out new features. Then, on to 3-D!
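The post doesn't say whether features are rescaled before PCA, but the four it lists live on very different scales: a duration in seconds dwarfs a count of sections, and PCA on raw values lets the column with the largest numeric variance dominate the first component. A minimal sketch of per-feature z-score standardization, assuming a plain `double[][]` with one row per track; the class name and sample values are hypothetical:

```java
import java.util.Arrays;

/**
 * Sketch: z-score standardization of a track-by-feature matrix before PCA.
 * Assumption (hypothetical layout): rows are tracks; columns are features such as
 * a timbre value, number of sections, duration in seconds, and beats per bar.
 */
public class FeatureScaling {

    /** Returns a copy in which every column has mean 0 and population standard deviation 1. */
    static double[][] standardize(double[][] x) {
        int n = x.length, d = x[0].length;
        double[][] z = new double[n][d];
        for (int j = 0; j < d; j++) {
            double mean = 0;
            for (double[] row : x) mean += row[j];
            mean /= n;
            double var = 0;
            for (double[] row : x) var += (row[j] - mean) * (row[j] - mean);
            double sd = Math.sqrt(var / n);
            for (int i = 0; i < n; i++) {
                // A constant column carries no information: map it to 0 rather than divide by 0.
                z[i][j] = (sd == 0) ? 0 : (x[i][j] - mean) / sd;
            }
        }
        return z;
    }

    public static void main(String[] args) {
        // Hypothetical rows: {timbre value, number of sections, duration (s), beats per bar}
        double[][] tracks = {
            {12.5, 8, 245.0, 4},
            {30.1, 5, 412.0, 3},
            {18.7, 11, 198.0, 4}
        };
        for (double[] row : standardize(tracks)) {
            System.out.println(Arrays.toString(row));
        }
    }
}
```

Standardizing every column this way is equivalent to running PCA on the correlation matrix instead of the covariance matrix, so each feature gets an equal say regardless of its units.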

Posted by Anita on February 22nd, 2008 — in feedback

What’s a good Java package for scatter plots? I just need to plot points with labels, no interactivity.

Posted by Anita on February 22nd, 2008 — in milestones

I now have a working PCA engine and a few simple features implemented. I’ve also acquired a large set of MP3s with accompanying Echo Nest data for building test libraries. So now I have code that takes a small test library, computes or retrieves a handful of audio features for each of its tracks, and remaps the tracks onto a lower-dimensional space.

Now I’m working on a way to show the PCA results visually, since the engine is much harder to test without that. I’m also developing new Feature classes that focus on general timbral descriptions of a track.
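The post doesn't describe how the PCA engine computes its components. As one possible sketch, assuming already-standardized features in a `double[][]` (one row per track), the covariance matrix can be decomposed with power iteration plus deflation, and each track projected onto the top two components to get x/y coordinates for a scatter plot. Power iteration is a swapped-in technique chosen to keep the example dependency-free; a linear-algebra library's eigendecomposition or SVD would be the sturdier choice. All class and method names are hypothetical.

```java
import java.util.Arrays;
import java.util.Random;

/**
 * Hypothetical sketch: PCA via power iteration with deflation, a dependency-free
 * stand-in for a library eigendecomposition. Rows are tracks, columns are features.
 */
public class PcaSketch {

    static double[] columnMeans(double[][] x) {
        double[] mean = new double[x[0].length];
        for (double[] row : x)
            for (int j = 0; j < mean.length; j++) mean[j] += row[j] / x.length;
        return mean;
    }

    /** Sample covariance matrix (divides by n - 1). */
    static double[][] covariance(double[][] x) {
        int n = x.length, d = x[0].length;
        double[] mean = columnMeans(x);
        double[][] c = new double[d][d];
        for (double[] row : x)
            for (int a = 0; a < d; a++)
                for (int b = 0; b < d; b++)
                    c[a][b] += (row[a] - mean[a]) * (row[b] - mean[b]) / (n - 1);
        return c;
    }

    /** Scales v to unit length in place and returns its original length. */
    static double normalize(double[] v) {
        double norm = 0;
        for (double vi : v) norm += vi * vi;
        norm = Math.sqrt(norm);
        if (norm > 0) for (int i = 0; i < v.length; i++) v[i] /= norm;
        return norm;
    }

    /** Top-k eigenvectors of a symmetric positive semidefinite matrix, found one at a time. */
    static double[][] topComponents(double[][] cov, int k, int iters) {
        int d = cov.length;
        double[][] c = new double[d][];
        for (int i = 0; i < d; i++) c[i] = cov[i].clone();
        Random rng = new Random(42); // random start avoids beginning orthogonal to the answer
        double[][] comps = new double[k][];
        for (int m = 0; m < k; m++) {
            double[] v = new double[d];
            for (int a = 0; a < d; a++) v[a] = rng.nextGaussian();
            normalize(v);
            double lambda = 0;
            for (int t = 0; t < iters; t++) {
                double[] w = new double[d];
                for (int a = 0; a < d; a++)
                    for (int b = 0; b < d; b++) w[a] += c[a][b] * v[b];
                lambda = normalize(w); // ||C v|| converges to the eigenvalue
                if (lambda == 0) break; // nothing left to extract
                v = w;
            }
            comps[m] = v;
            // Deflation: subtract lambda * v v^T so the next pass finds the next component.
            for (int a = 0; a < d; a++)
                for (int b = 0; b < d; b++) c[a][b] -= lambda * v[a] * v[b];
        }
        return comps;
    }

    /** Coordinates of each mean-centered track along each component. */
    static double[][] project(double[][] x, double[][] comps) {
        double[] mean = columnMeans(x);
        double[][] out = new double[x.length][comps.length];
        for (int i = 0; i < x.length; i++)
            for (int m = 0; m < comps.length; m++)
                for (int j = 0; j < mean.length; j++)
                    out[i][m] += (x[i][j] - mean[j]) * comps[m][j];
        return out;
    }

    public static void main(String[] args) {
        // Hypothetical standardized features for four tracks.
        double[][] x = { {-1.2, -0.9, 0.3}, {-0.4, -0.5, -1.1}, {0.5, 0.6, 1.2}, {1.1, 0.8, -0.4} };
        double[][] comps = topComponents(covariance(x), 2, 1000);
        for (double[] p : project(x, comps)) System.out.println(Arrays.toString(p));
    }
}
```

Each component is only defined up to sign, so a plot can come out mirrored compared with another implementation; the relative positions of the tracks are unaffected.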

Posted by Anita on February 4th, 2008 — in audio features, definitions, feedback

I’m getting deeper into the coding, and I am now trying to answer the question “What is a feature?” Specifically: which features can I glean from the audio to make a meaningful distinction between one song and the next, and what is the general description of this thing I call a “feature”?

My project isn’t meant to focus on figuring out or implementing these features; my focus is on bringing them together into a navigable representation. But while designing the Feature class, I find myself wondering:

- Do features operate at the song level or at the section level? I should be able to handle either type, but then I would sometimes be mapping song sections and sometimes whole songs. What do I show the user in the interface if I’m really only mapping a section of a song?

- Should I try to choose one section to be representative of the piece as a whole, and just do my analysis on that section?

- What are the must-have features that people have already written code for and that can easily be adapted and plugged into my engine?

- What kind of rhythm-based features can I pull out? (I mention this because I am sorely lacking in the rhythm arena.)
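One way to keep the song-level versus section-level question open in code is to define a feature per section and let a default method aggregate it to a whole track. A hypothetical sketch: the `Section`, `Track`, and `SectionFeature` names and the duration-weighted mean are assumptions for illustration, not the project's actual Feature class.

```java
import java.util.List;

/** Hypothetical sketch: section-level features with a track-level aggregate. */
public class FeatureLevels {

    /** Assumed section data: start time (s), duration (s), mean loudness (dB). */
    record Section(double start, double duration, double loudness) {}

    /** Assumed track data: a title and its sections. */
    record Track(String title, List<Section> sections) {}

    /** A feature defined per section; the default method lifts it to the whole track. */
    interface SectionFeature {
        double valueFor(Section s);

        /** Duration-weighted mean over sections, so long sections count more than short ones. */
        default double valueFor(Track t) {
            double sum = 0, total = 0;
            for (Section s : t.sections()) {
                sum += valueFor(s) * s.duration();
                total += s.duration();
            }
            return total == 0 ? Double.NaN : sum / total;
        }
    }

    public static void main(String[] args) {
        Track t = new Track("example", List.of(
                new Section(0, 30, -12), new Section(30, 90, -6)));
        SectionFeature loudness = Section::loudness;
        System.out.println(loudness.valueFor(t)); // prints -7.5
    }
}
```

Choosing a single representative section, as the second question suggests, would amount to replacing the weighted mean with a selection rule (for example, the longest section).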

I will start with features like these (for each track):

- number of sections

- number of types of sections (counted by timbre type)

- number of types of sections (counted by pitch pattern type)

- mode of timbres

- mean level of specific timbre coefficients (coefficients shown visually at the bottom of this page)

- tempo

- mean loudness (or max, maybe)

- confidence level of autotag assignment with tag1, tag2, tag3, etc… (multiple features here)

- frequency of appearance of tag assignment with tagA, tagB, tagC, etc… (multiple features here)

- time signature

- time signature stability

- track duration

(Note that, right now, I am not talking about similarity measures for pairs of songs, but rather quantifiable measures for one song at a time. I’ll deal with similarity later.)
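Several of the listed features can be computed directly once a track is split into sections. The sketch below assumes each section already carries a timbre-type label (for example, a cluster id), a time signature in beats per bar, a duration in seconds, and a vector of timbre coefficients; all names are hypothetical, and “time signature stability” is interpreted here, as an assumption, as the fraction of the track’s duration spent in its most common time signature.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical per-track features computed from pre-segmented sections. */
public class TrackFeatures {

    /** Assumed section data: timbre-type label, beats per bar, duration (s), timbre coefficients. */
    record Section(int timbreType, int beatsPerBar, double duration, double[] timbre) {}

    static int numberOfSections(List<Section> s) {
        return s.size();
    }

    /** Number of distinct timbre types among the sections. */
    static long numberOfTimbreTypes(List<Section> s) {
        return s.stream().mapToInt(Section::timbreType).distinct().count();
    }

    /** Most frequent timbre type; ties go to the smaller label; -1 if there are no sections. */
    static int modeOfTimbres(List<Section> s) {
        Map<Integer, Integer> counts = new HashMap<>();
        for (Section x : s) counts.merge(x.timbreType(), 1, Integer::sum);
        int best = -1, bestCount = 0;
        for (Map.Entry<Integer, Integer> e : counts.entrySet()) {
            int label = e.getKey(), count = e.getValue();
            if (count > bestCount || (count == bestCount && label < best)) {
                best = label;
                bestCount = count;
            }
        }
        return best;
    }

    /** Unweighted mean of timbre coefficient k over sections. */
    static double meanTimbreCoefficient(List<Section> s, int k) {
        return s.stream().mapToDouble(x -> x.timbre()[k]).average().orElse(Double.NaN);
    }

    /** Assumed definition: share of total duration spent in the most common time signature. */
    static double timeSignatureStability(List<Section> s) {
        Map<Integer, Double> byMeter = new HashMap<>();
        double total = 0;
        for (Section x : s) {
            byMeter.merge(x.beatsPerBar(), x.duration(), Double::sum);
            total += x.duration();
        }
        double max = 0;
        for (double d : byMeter.values()) max = Math.max(max, d);
        return total == 0 ? Double.NaN : max / total;
    }

    static double trackDuration(List<Section> s) {
        return s.stream().mapToDouble(Section::duration).sum();
    }

    public static void main(String[] args) {
        List<Section> track = List.of(
                new Section(0, 4, 30.0, new double[] {1, 2}),
                new Section(1, 4, 60.0, new double[] {3, 4}),
                new Section(0, 3, 10.0, new double[] {5, 6}));
        System.out.println(numberOfTimbreTypes(track));    // prints 2
        System.out.println(modeOfTimbres(track));          // prints 0
        System.out.println(timeSignatureStability(track)); // prints 0.9
    }
}
```

Each method returns one number per track, which matches the note above: these are per-song measures, and pairwise similarity is left for later.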

Posted by Anita on January 31st, 2008 — in organization, papers, background

Today I set up SVN with Trac on scripts.mit.edu, and also Eclipse for Java development on my Mac. Now I just have to point the Eclipse SVN plugin at my SVN repository, but I’m going to wait on that until I have some code in there. I went through an Eclipse tutorial and it looks awesome; I don’t know how I haven’t used it before.

I also set up TeX and got some TeX templates from friends to start with. I am going to create an outline for my thesis, to which I can continually add notes as I read through all these papers and books.

I also picked up Barry Schwartz’s “The Paradox of Choice: Why More Is Less” and Kusek and Leonhard’s “The Future of Music”.

Posted by Anita on January 28th, 2008 — in organization, papers, background

I picked up David Jennings’s “Net, Blogs and Rock’n’Roll” and am working through it. Of most interest to me so far are Alan Lomax’s “cantometrics” work from the 1960s and, more recently, MusicIP (including MyDJ). I’m wondering how successful MusicIP has been… What’s it lacking, and what do they do well?

I’ve also been working on setting up LaTeX, a GUI editor, and citation-handling software (argh!), plus some revision control for this project. Right now I think I’ll go with BibDesk and TeXShop for writing in LaTeX, and Subversion for revision control. Any advice welcome, as always.

Posted by Anita on January 21st, 2008 — in Uncategorized

Here is the (not quite complete) browser for last.fm users’ listening preferences and friends that I wrote for Tod’s class last term. It shows the focal user’s friends, scaled in size by musical taste compatibility, along with the top tags for the focal user’s most-listened-to artists. The goal was to focus on what types of music each user listens to, rather than on particular artists, and to look at last.fm as a social cloud of music listeners. It’s my first project in Flash, and it uses the flare visualization toolkit.

(Click on any last.fm username to move around in the clouds. Note: it’s a little slow, because it downloads a lot of RSS feeds in the background.)

Posted by Anita on January 21st, 2008 — in papers, background

Argh, I’m getting back to normal now, much later than I’d anticipated. Over the last couple of weeks I’ve been in Austin and Taiwan, then back to Boston, and although I’ve been busy, it hasn’t been with thesis work specifically.

But now, I’m back to work for good, ready to crank hard and consistently until I finish in May.

Today I met Takuya Fujishima, of Yamaha, and talked with him about soundsieve and my thesis project. He’s a very interesting guy, completely on top of the work going on in MIR — he specifically mentioned Paul (Lamere) and Elias Pampalk when I was talking to him about my project. He also suggested I look into the work of these Japanese researchers:

This is certainly a good start in getting back to reading relevant papers. I’m definitely curious to know if there are must-read papers/people that I should take a look at. I’m interested in these topics specifically:

- interfaces for music browsing and recommendation

- multidimensional analysis

- audio features that are commonly used to discriminate between different styles/genres

- projects that combine audio analysis with contextual descriptors

- commentary on what makes an “appropriate” music database (how can it be made as unbiased as possible?)

- interface design for 3D spaces

I found these lists of MIR-related papers/dissertations, and will look through them:

As far as actual work is concerned, I am focusing for the next few days on truly defining the intended scope of my project and creating a plan for the programming I have to do. More on that soon.

Posted by Anita on December 2nd, 2007 — in Uncategorized

I’m in a bit of a holding pattern on my thesis for the next 2-3 weeks, because I am finishing up the semester and my classes have gotten busier. This weekend I am off to Vancouver/Whistler for the “Music, Brain, and Cognition” Workshop at NIPS 2007. I return to a problem set and a final exam for my signal processing class. I’ll spend the following week on my project for Tod’s class, which will be a (simple) visualization of a user’s music preferences based on last.fm data feeds.

I’ll finally be free from school work on December 19th, so I hope to make some headway at that point.

Posted by Anita on December 2nd, 2007 — in milestones

I got this email on November 29:

> Anita ~ MASCOM has reviewed the comments by your readers as well as the comments provided as a result of your presentation on Crit day — congratulations your proposal is considered approved.