Posted by Anita on April 20th, 2008 — in audio features, contextual features, screenshots

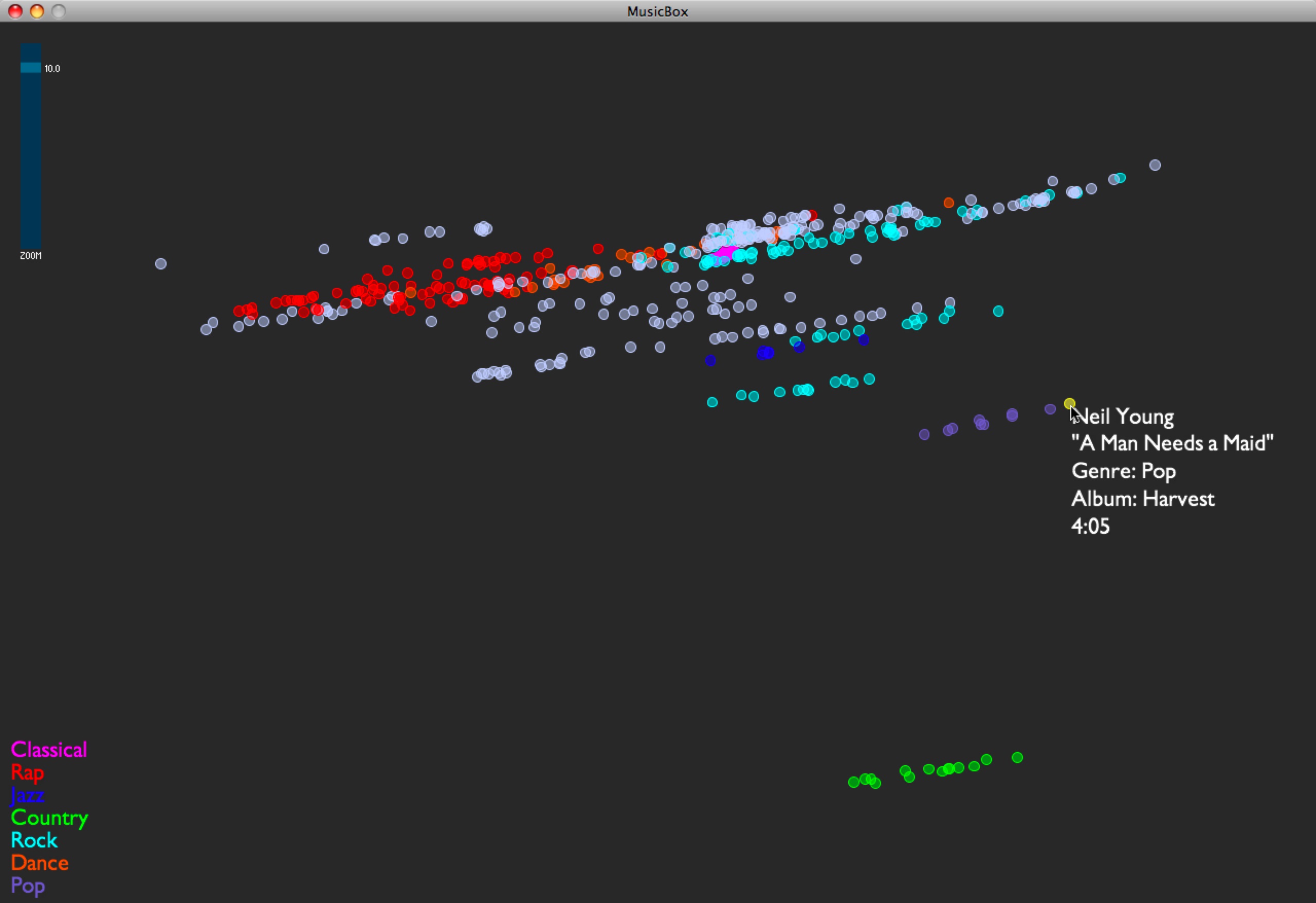

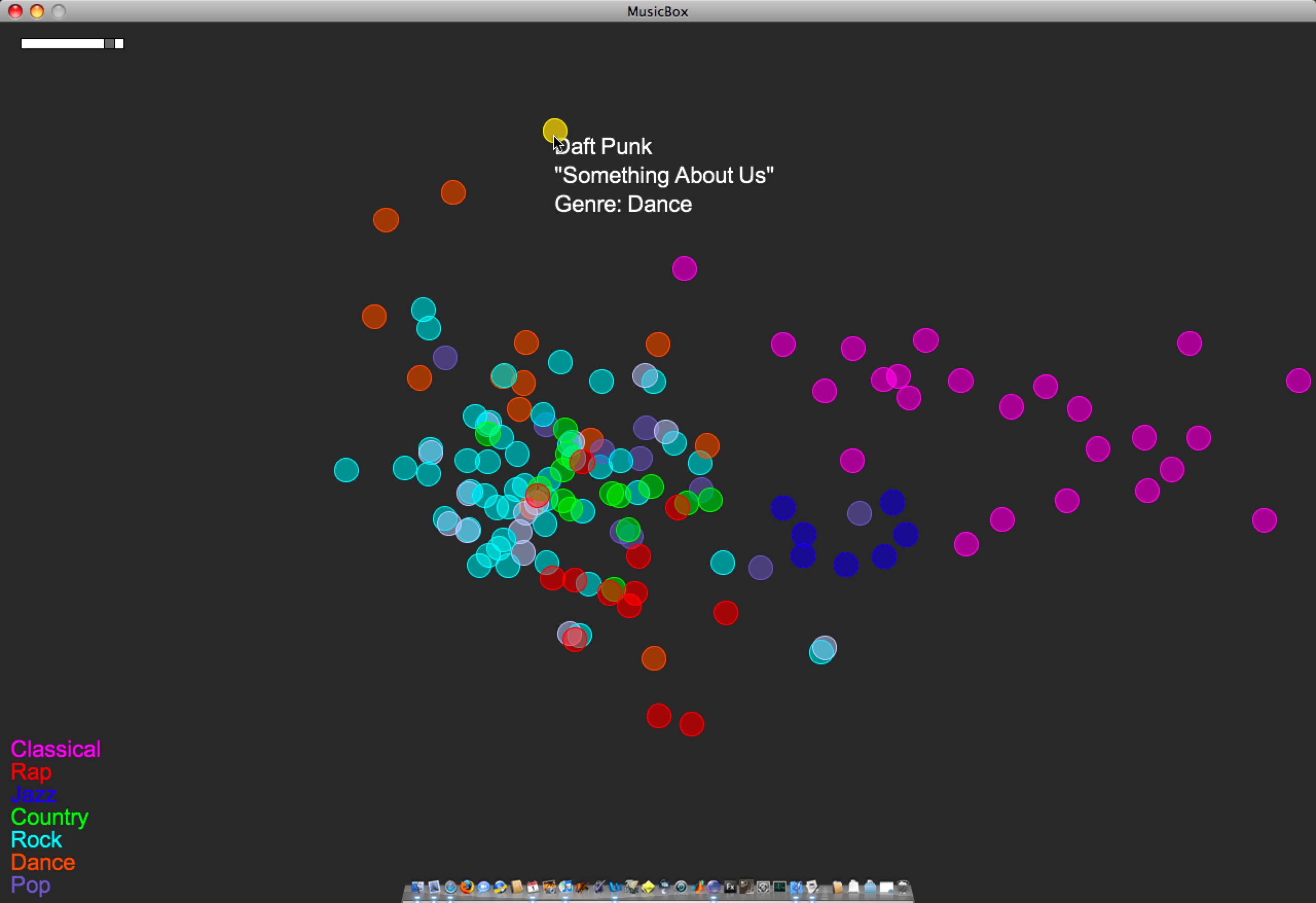

I implemented the first set of contextual data for my test set of music. I’m grabbing moods off of allmusic.com. These are descriptors like “spooky”, “lively”, or “epic”, which are usually listed per album. I’m incorporating these features into the PCA by treating each descriptor as a separate feature, giving each song with the given descriptor a “1”, and all others a “0”. These contextual data are being added into the model that already has audio-based data.
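A minimal sketch of the 0/1 encoding described above, in Python. The song names, tags, audio values, and the helper `mood_indicator_columns` are all made up for illustration; this is not the actual code behind the map.

```python
def mood_indicator_columns(song_moods):
    """Turn per-song mood tags into 0/1 indicator columns.

    song_moods maps a song name to the set of mood tags it inherits
    from its album. Returns the sorted tag vocabulary and, for each
    song, one 0/1 value per tag in that vocabulary.
    """
    vocabulary = sorted({tag for tags in song_moods.values() for tag in tags})
    rows = {
        song: [1 if tag in tags else 0 for tag in vocabulary]
        for song, tags in song_moods.items()
    }
    return vocabulary, rows


# Hypothetical data: tags and audio values are invented for this example.
song_moods = {
    "song_a": {"spooky", "epic"},
    "song_b": {"lively"},
    "song_c": {"spooky", "epic"},  # same album as song_a, so same tags
}
audio_features = {
    "song_a": [0.12, 0.80],
    "song_b": [0.55, 0.31],
    "song_c": [0.40, 0.66],
}

vocabulary, mood_rows = mood_indicator_columns(song_moods)

# Append the mood columns to the existing audio columns, one row per song.
combined = {song: audio_features[song] + mood_rows[song] for song in song_moods}
```

Songs from the same album end up with identical mood columns, so within an album only the audio columns differ.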

As expected, this stratifies the whole data set, and is an interesting way of separating out the albums… those albums with the same sets of mood tags appear in a stripe across the space, and their position in the stripe comes from the audio features. If I had to guess what the audio feature separation means here, I’d say it’s distributing music on a spectrum of edgier, more bursty sounds (on the left in this visual representation) to smoother, softer sounds (on the right). I am guessing this purely from inspection.

Here are some examples:

- I see a stripe of this album: “Black & Blue” by Backstreet Boys. It moves from “Shining Star” (click to listen; you need Windows Media Player) on the edgy side, to “How Did I Fall In Love With You” (click to listen IF YOU DARE; you need Windows Media Player) on the smooth side.

- I see a stripe of this album: “Sgt. Pepper’s Lonely Hearts Club Band” by The Beatles. It moves from “Getting Better” on the edgy side, to “Lovely Rita” in the middle, to “A Day In the Life” on the smooth side.

- I see a stripe of this album: “Our Time in Eden” by 10,000 Maniacs. It moves from “Candy Everyone Wants” on the edgy side, to “How You’ve Grown” on the smooth side.

Also, Busta Rhymes’ smoothest piece in the test set, “Hot Fudge”, is way less smooth than the Beatles’ edgiest piece.


Posted by Anita on April 15th, 2008 — in audio features, feedback

My newest problem is one that I knew I’d come across eventually: What do I do with songs that have missing data? This most recently came up when I was adapting Thomas Lidy’s rhythm feature code… It couldn’t open some of the MP3s in my test set, so I have no rhythm feature data for those songs. Lacking a better idea, I just gave them the mean values of all the other songs. But this doesn’t seem right… I can’t really give them any value. But if I don’t give them any values, the PCA can’t process these tracks anymore; I can’t put them in the space at all.

So, not sure what to do about these songs. Anyone have any suggestions?
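For reference, the mean-fill stopgap described above might look roughly like this in Python. The function name, the use of `None` for missing values, and the sample numbers are assumptions made for the sketch.

```python
from statistics import fmean


def fill_missing_with_column_mean(rows):
    """Replace None entries with the mean of the observed values in that column.

    Assumes every column has at least one observed value.
    """
    n_cols = len(rows[0])
    col_means = []
    for j in range(n_cols):
        observed = [row[j] for row in rows if row[j] is not None]
        col_means.append(fmean(observed))
    filled = [
        [col_means[j] if value is None else value for j, value in enumerate(row)]
        for row in rows
    ]
    # Keep a mask so mean-filled songs can be flagged or drawn differently later.
    was_missing = [any(value is None for value in row) for row in rows]
    return filled, was_missing


# Hypothetical rhythm features; the last song's file could not be opened.
rhythm_rows = [
    [2.0, 10.0],
    [4.0, 30.0],
    [None, None],
]
filled, was_missing = fill_missing_with_column_mean(rhythm_rows)
```

Since PCA centers each feature, a mean-filled value sits at zero on that feature after centering, so these songs are positioned by their remaining features only. Keeping the `was_missing` mask at least makes it possible to mark them on the map.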


Posted by Anita on April 15th, 2008 — in audio features, screenshots

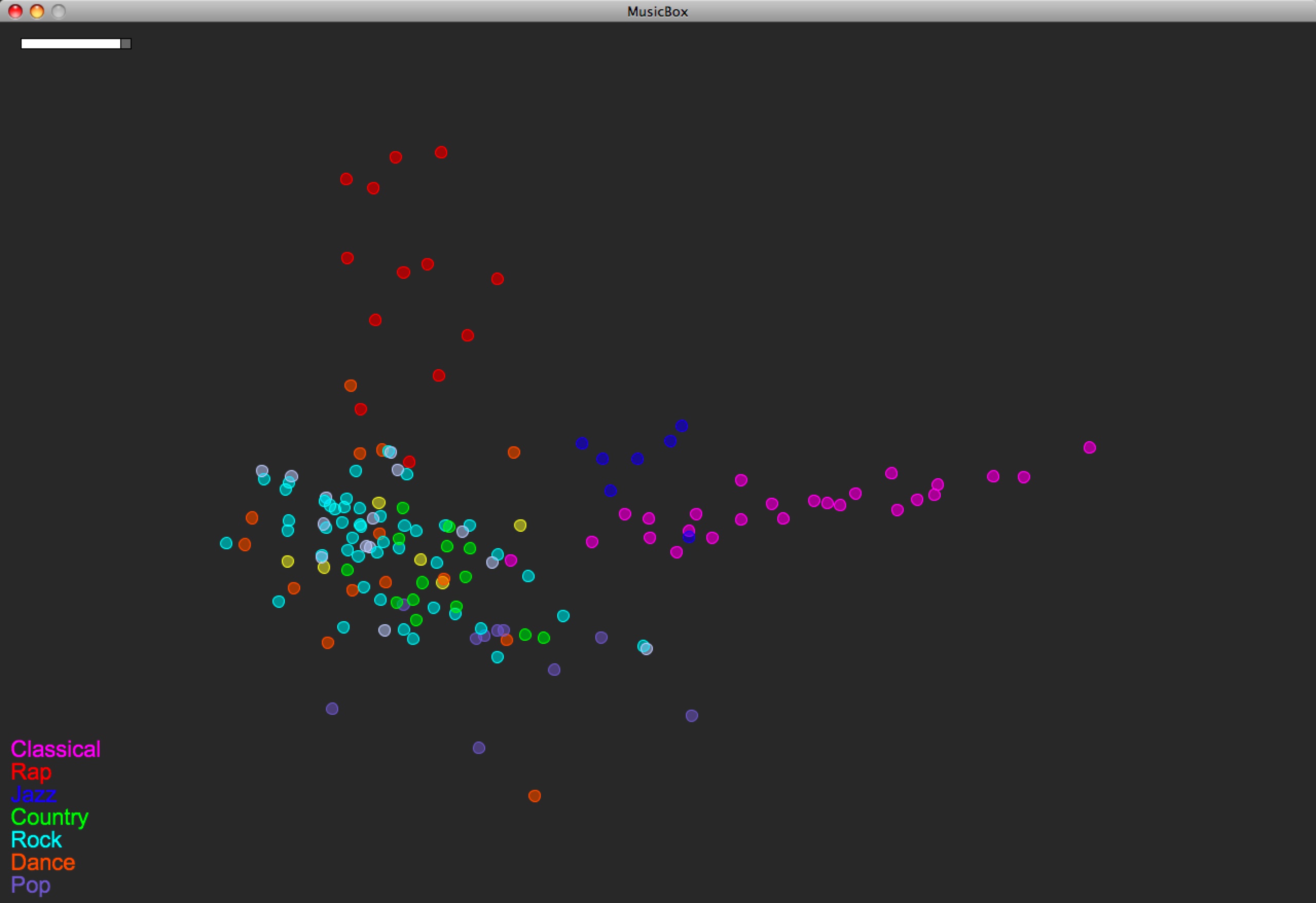

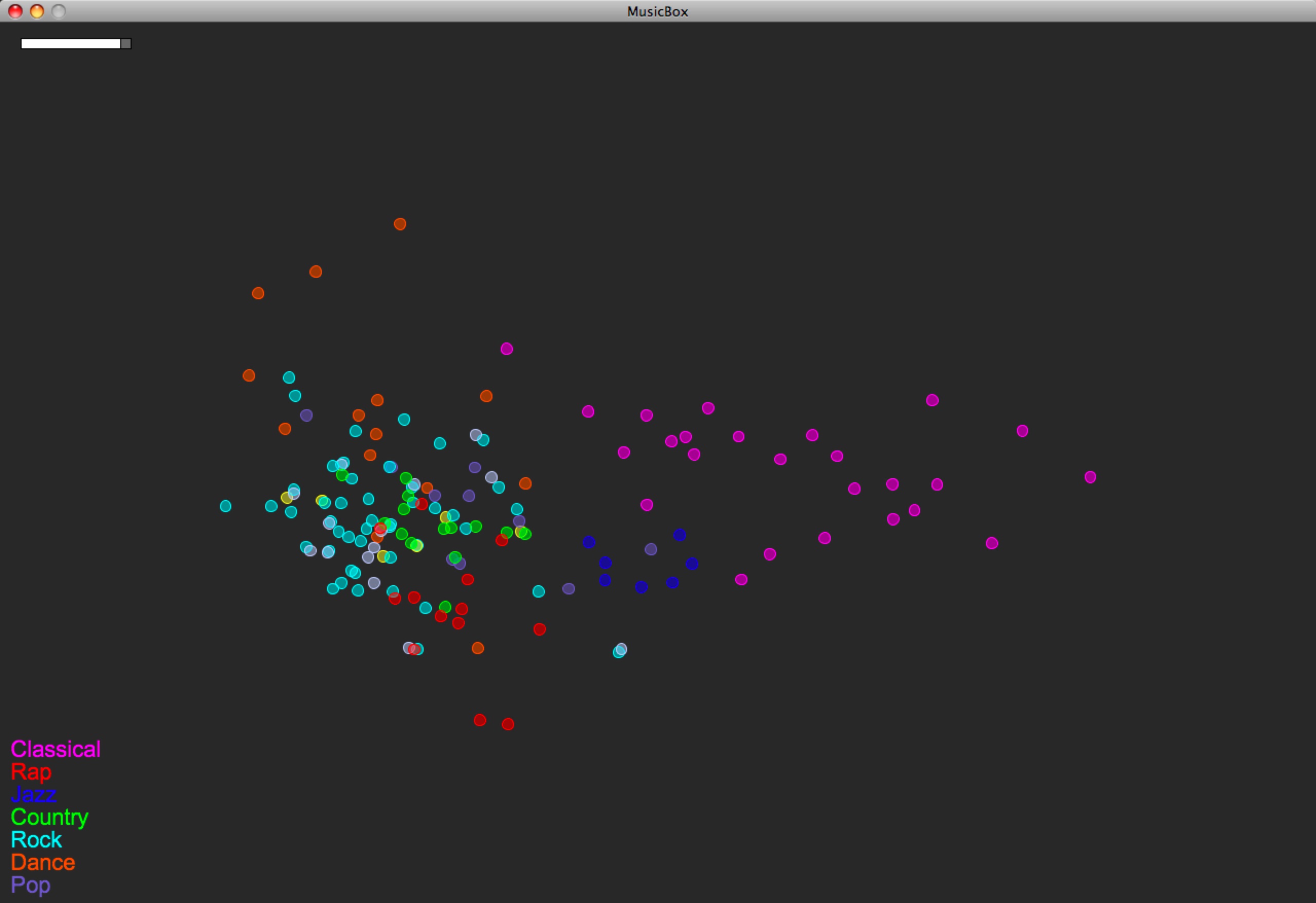

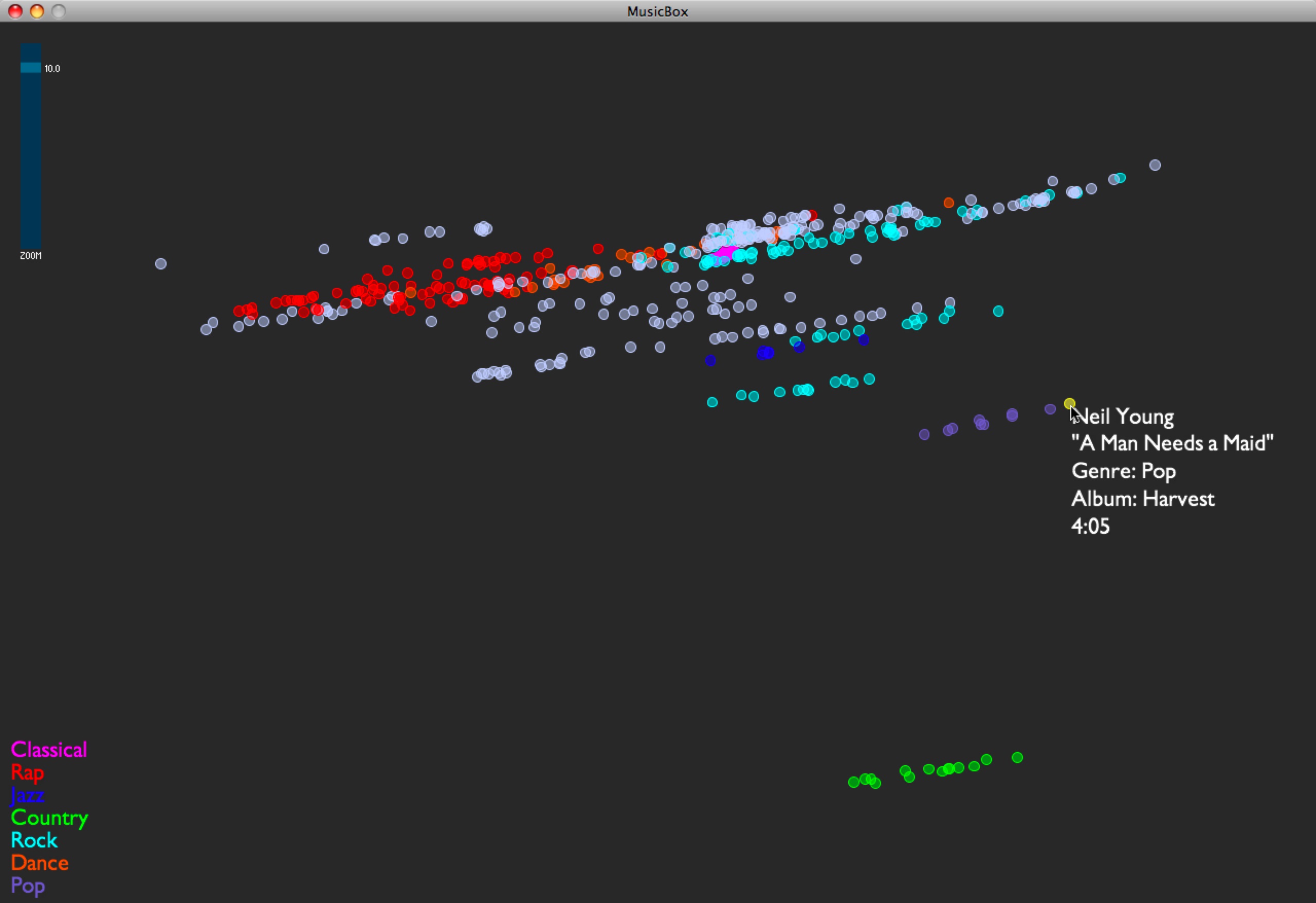

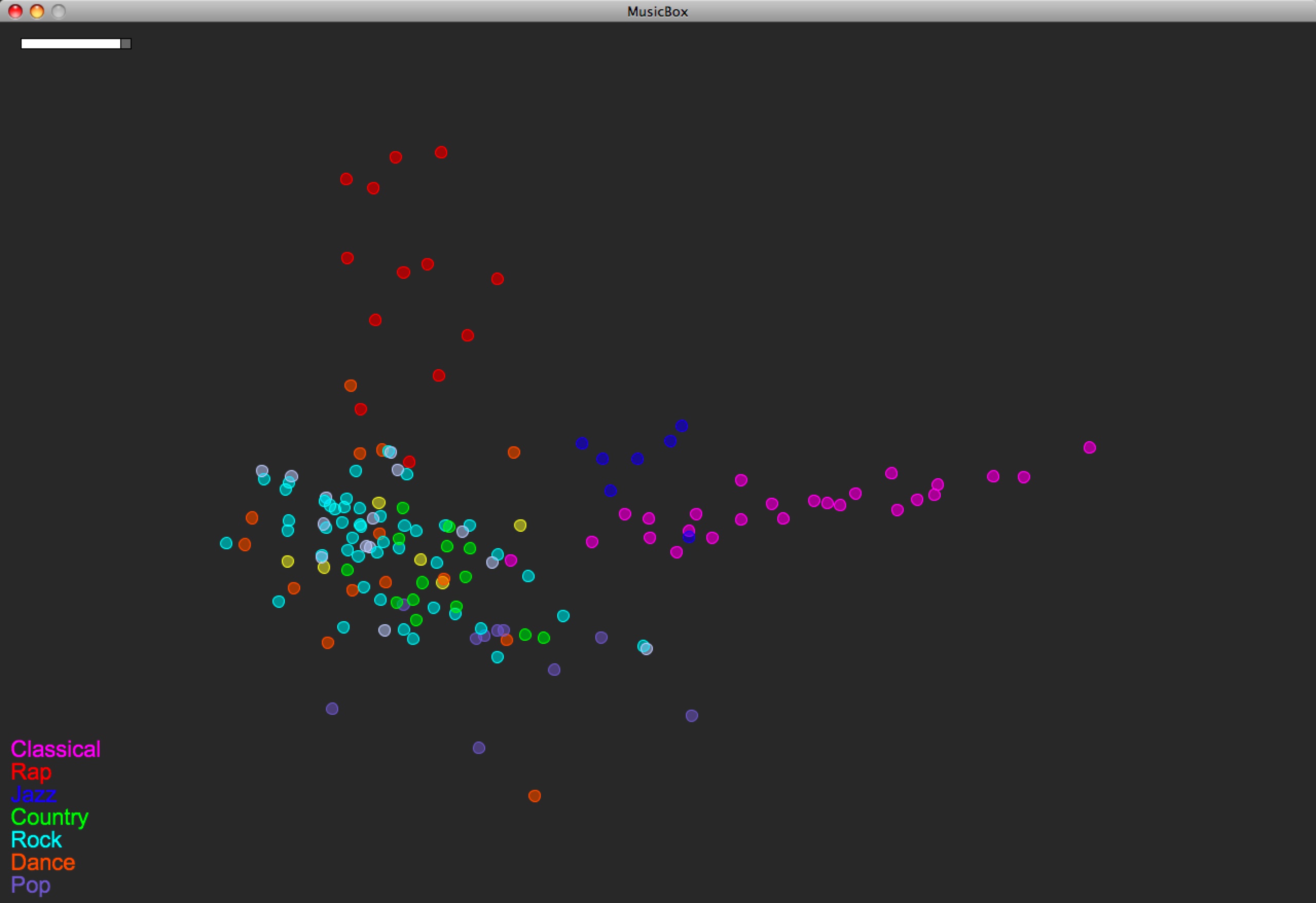

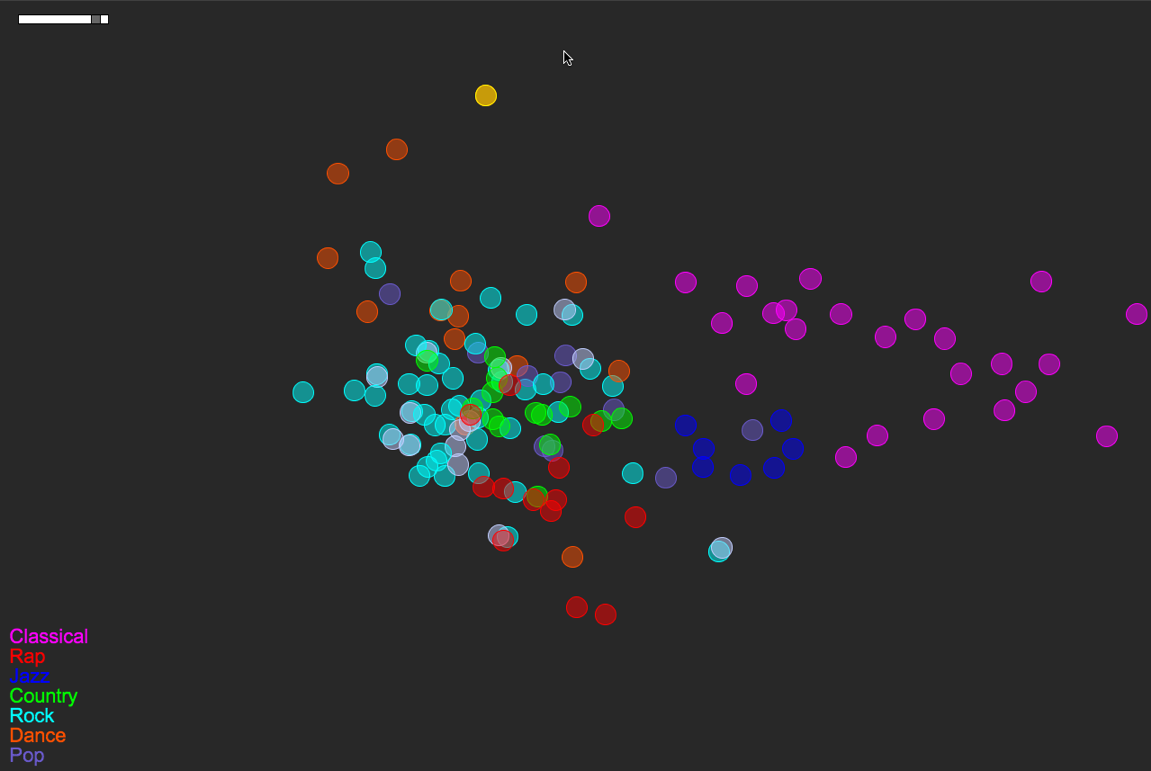

I was able to add some rhythm features to the model. I’m using Thomas Lidy and Andreas Rauber’s Rhythm Histograms (RH), summarizing each histogram (one histogram per song) with four features: mean, median, standard deviation, and (at Thomas’s recommendation) kurtosis. Here’s how the map of the same music library looks now:

You can compare with the last version, before RH features were used:

Unfortunately the Rhythm Histogram code couldn’t open my classical music, so you have to disregard that data (to which I gave the mean value of the rest of the songs’ RH data).

So the main thing that happened here is that the rap music popped out. And perhaps a wee bit the jazz.
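The four-number summary of each Rhythm Histogram mentioned above can be sketched like this in Python. Two assumptions to flag: the statistics are taken over the histogram's bin values, and kurtosis is computed as excess kurtosis (normal distribution = 0). The post does not say which conventions were actually used, and the bin values below are made up.

```python
from statistics import fmean, median, pstdev


def summarize_histogram(bins):
    """Return [mean, median, standard deviation, excess kurtosis] of the bin values."""
    mean = fmean(bins)
    std = pstdev(bins)  # population standard deviation
    if std == 0:
        # A perfectly flat histogram has no defined kurtosis; 0.0 is a placeholder.
        kurt = 0.0
    else:
        # Fourth standardized moment, minus 3 so a normal distribution scores 0.
        kurt = fmean(((b - mean) / std) ** 4 for b in bins) - 3.0
    return [mean, median(bins), std, kurt]


# Hypothetical histogram with five bins (a real Rhythm Histogram has many more).
example_bins = [1.0, 2.0, 3.0, 4.0, 5.0]
summary = summarize_histogram(example_bins)
```

Each song then contributes four extra columns to the feature table instead of one column per histogram bin.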


Posted by Anita on April 13th, 2008 — in milestones, screenshots

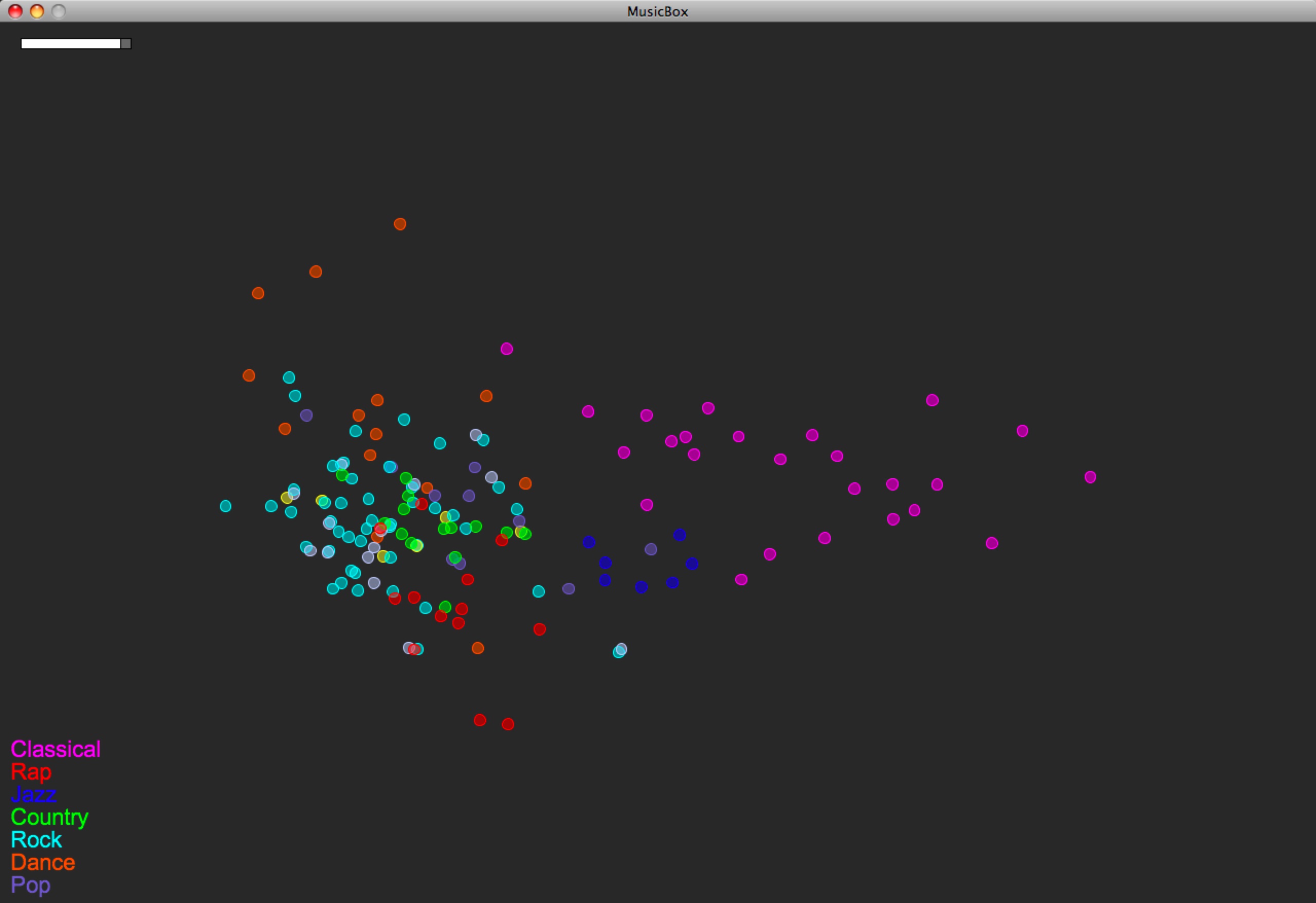

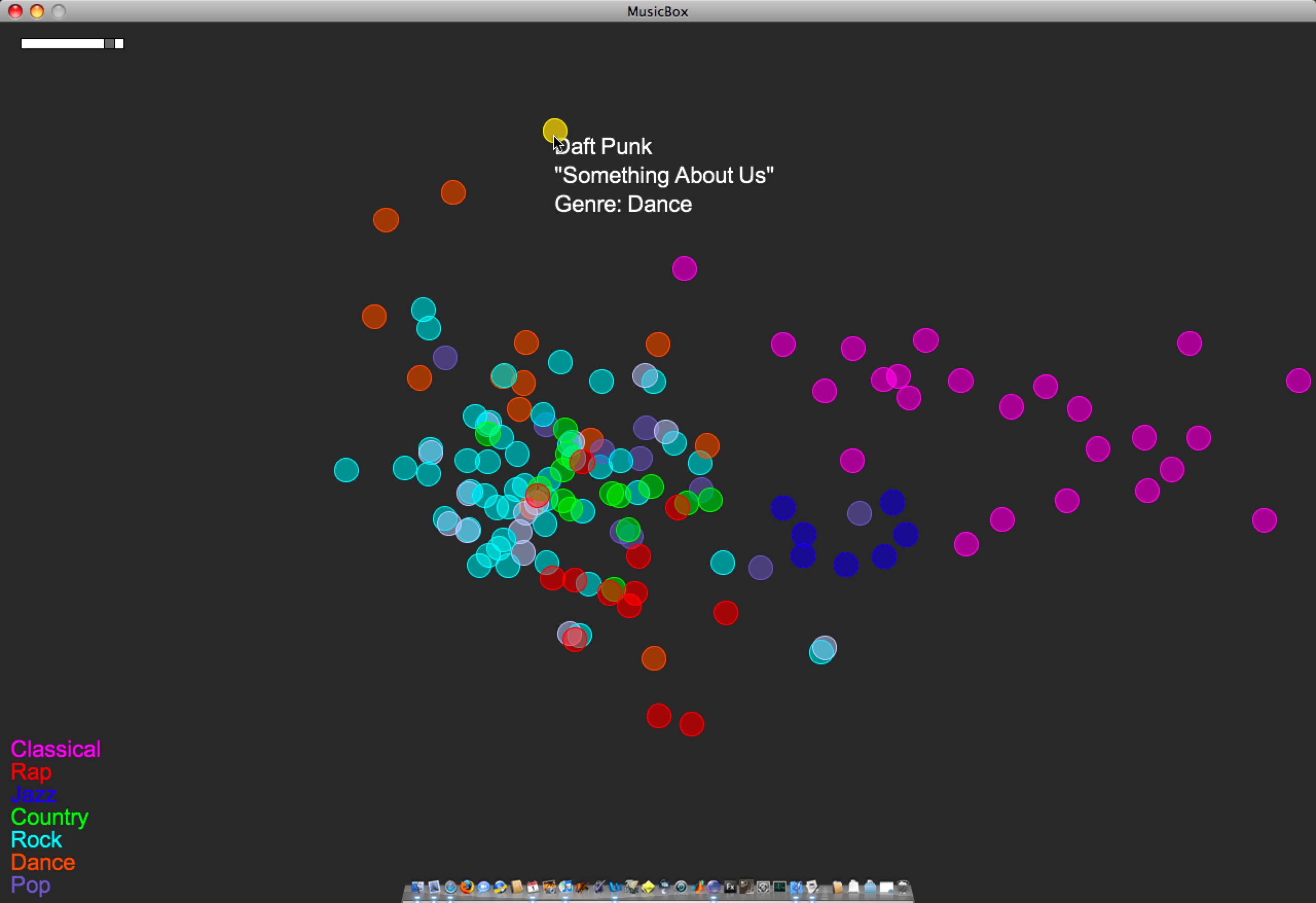

Now posting the equivalent of the last post’s video, except in 2D, built with Processing. Just click the image below to load the 1.5 MB QuickTime video (larger version here, 12 MB). This is the version I demoed for sponsors on April 1st and 2nd.

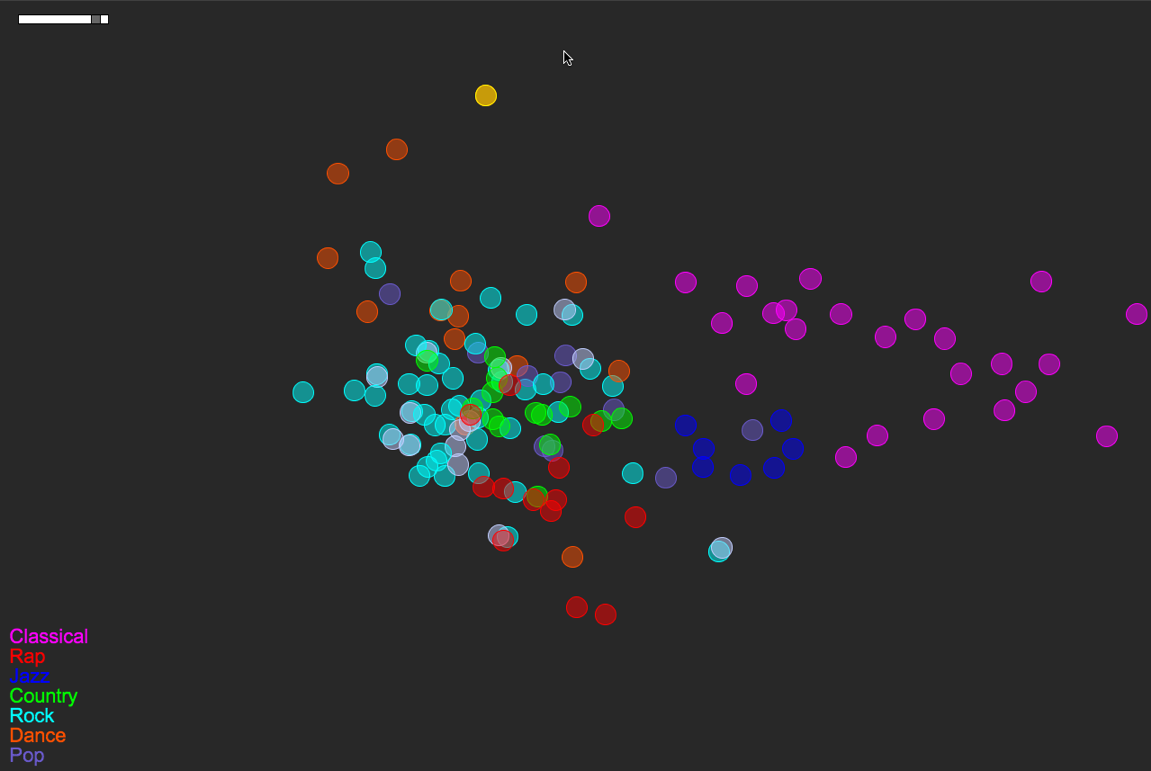

Songs/circles are still located based on a PCA of timbre, tempo, duration, number of distinct sections, and time signature stability. The circles’ colors are mapped straight from genre tag (see the legend at bottom-left).
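To make the placement step concrete, here is a rough, dependency-free Python sketch of projecting a song-by-feature table onto its first two principal components. It is only an illustration, not the code behind the video: the feature values are invented, timbre is left out to keep the table small, features are standardized first (an assumption), and power iteration with deflation stands in for whatever PCA routine was actually used.

```python
import math
import random
from statistics import fmean, pstdev


def standardize(rows):
    """Rescale each column to zero mean and unit variance."""
    columns = list(zip(*rows))
    means = [fmean(col) for col in columns]
    stds = [pstdev(col) or 1.0 for col in columns]  # guard constant columns
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]


def covariance(rows):
    """Covariance matrix of rows that are already centered."""
    n, d = len(rows), len(rows[0])
    return [[sum(r[i] * r[j] for r in rows) / n for j in range(d)] for i in range(d)]


def dominant_eigenpair(matrix, iterations=500, seed=0):
    """Largest eigenvalue and its unit eigenvector, found by power iteration."""
    d = len(matrix)
    rng = random.Random(seed)
    vec = [rng.gauss(0.0, 1.0) for _ in range(d)]
    norm = math.sqrt(sum(x * x for x in vec))
    vec = [x / norm for x in vec]
    for _ in range(iterations):
        product = [sum(matrix[i][j] * vec[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in product))
        if norm == 0.0:
            break
        vec = [x / norm for x in product]
    eigenvalue = sum(vec[i] * matrix[i][j] * vec[j] for i in range(d) for j in range(d))
    return eigenvalue, vec


def pca_2d(rows):
    """Return 2D coordinates per song and the two component vectors."""
    data = standardize(rows)
    cov = covariance(data)
    d = len(cov)
    components = []
    for _ in range(2):
        eigenvalue, vec = dominant_eigenpair(cov)
        components.append(vec)
        # Deflate: remove the component just found so the next one can surface.
        cov = [[cov[i][j] - eigenvalue * vec[i] * vec[j] for j in range(d)] for i in range(d)]
    coords = [[sum(v * w for v, w in zip(row, comp)) for comp in components] for row in data]
    return coords, components


# Hypothetical columns: tempo (BPM), duration (s), sections, time-signature stability.
song_features = [
    [120, 210, 4, 0.90],
    [90, 260, 6, 0.80],
    [140, 180, 3, 0.95],
    [60, 480, 9, 0.50],
    [100, 240, 5, 0.85],
    [128, 200, 4, 0.90],
]
coords, components = pca_2d(song_features)  # coords[i] is the (x, y) of song i
```

Each `coords[i]` pair can then be scaled to screen space to position that song's circle.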

Here’s a more detailed look at the layout of this small music library:

A few things to note:

- Some of the genre colorings are fairly well separated/clustered based on the small set of audio features I’m using. Note how classical music sits almost on its own. Jazz sits neatly nearby, between Classical and everything else. Rock is a nice mess that overlaps with Pop, Country, Rap, and Dance. These are relationships that make sense if you think about how loose or tight each genre label is.

- In a few instances, there are pairs of songs that are actually the same track at different bit rates (I had duplicates-with-different-bit-rates in the test library). One example of this is the light-blue-and-white pair just south of Jazz. This is The Flaming Lips’ “The Observer”, at 256 and 192 kbps. (One of those has a bad genre tag as well; that’s why it’s white.) Looking at The Flaming Lips’ “Zaireeka” album (not pictured) was also very cool, since you can see how the complementary stereo tracks are either very similar or very different.

- I listened to the leftmost Country song; it is Willie Nelson’s “Still Is Still Moving to Me”. According to this representation of the music library, this is the “country song most like rock”. You can certainly argue for or against that, but it’s still interesting to be able to start making characterizations like this.

- This is a small library (~150 songs). As it gets bigger, and as the feature set grows (i.e. when I implement new features), hopefully these patterns will just become more meaningful… We’ll see.
