Posted by Anita on October 28th, 2008 — in videos

I finally had the time (and the computer!) to put together a demo video of MusicBox. This video, more than the others on this site, shows a wide range of features, all from the most recent version of the software. I hope you find it a helpful introduction to my thesis project. [Go to the video: QuickTime | YouTube (Note the HD version!)]

As always, I welcome your questions and feedback!


Posted by Anita on September 15th, 2008 — in documents, milestones, screenshots

I submitted my written thesis in early August 2008. Rather than continue to hold off on posting it for IP reasons, I’m going to opt for the “open is better” attitude and just post it. I hope that this will encourage people to chat with me about where this research stands, where it’s going, and how they can get involved.

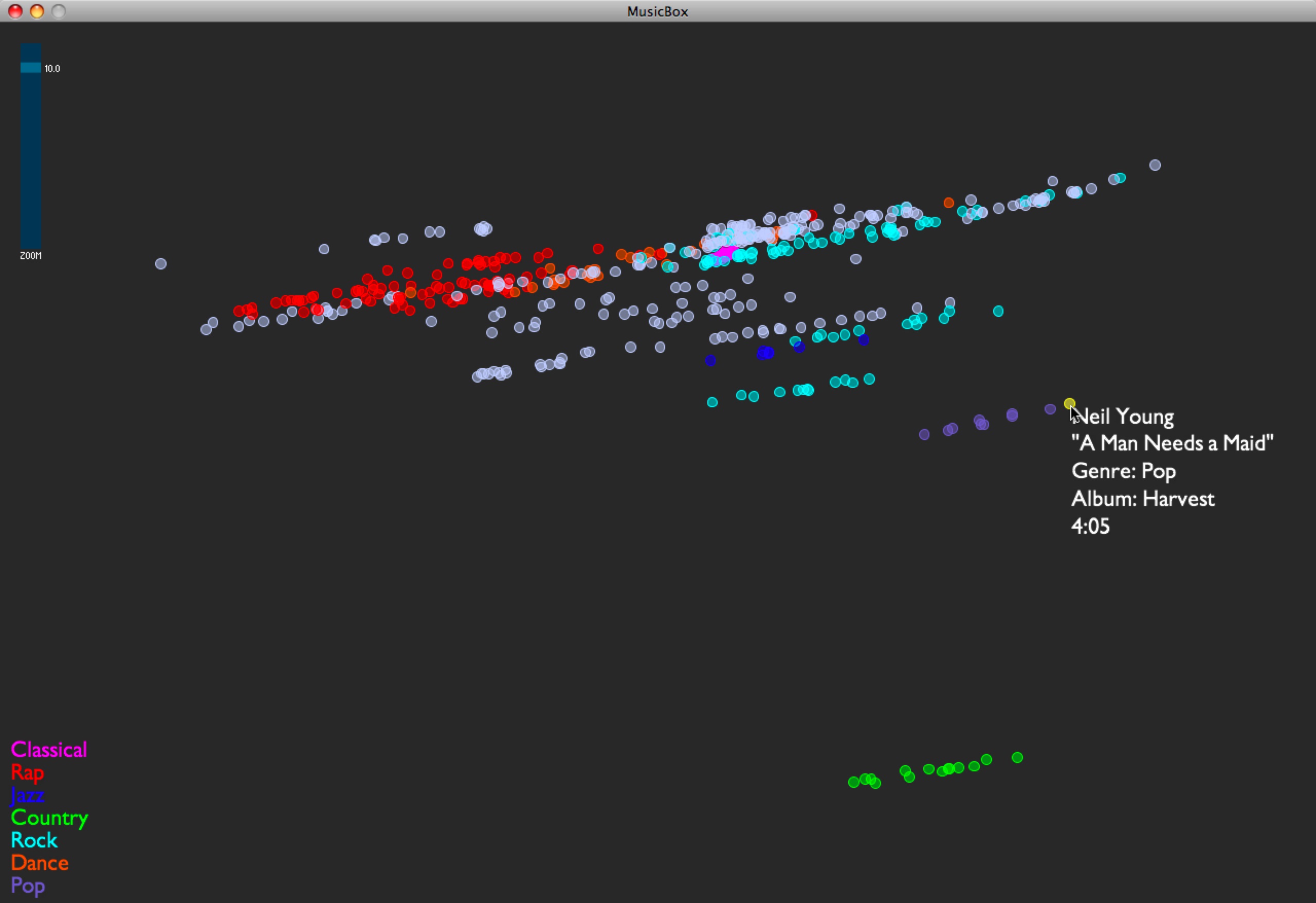

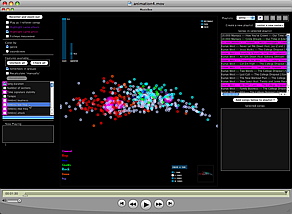

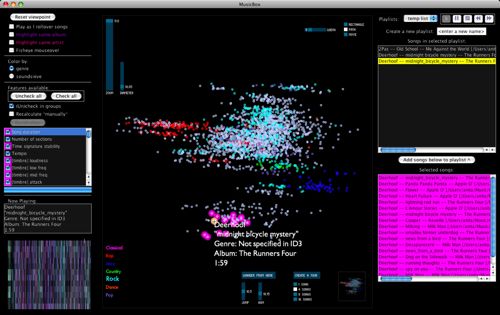

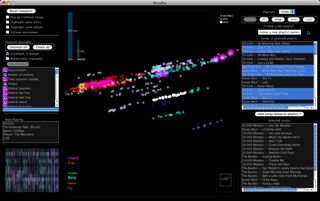

If this is your first visit to this site, I’ll briefly catch you up: This is a blog for my Master’s thesis at the MIT Media Lab, on a music browser called MusicBox. In this blog, you can find screenshots and videos of the project, which show the interactive maps that MusicBox creates.

I intend to continue developing MusicBox in the coming year, but will likely not update this blog with the developments, since the project as a thesis is complete. Once I decide where I will post updates, I will add a link here. (The new place will have a more complete set of screenshots and videos.)

If you would like to read my written thesis (10.2 MB, 124 pages), you can download the PDF. Feel free to send me feedback, either by posting a comment here or by emailing me.

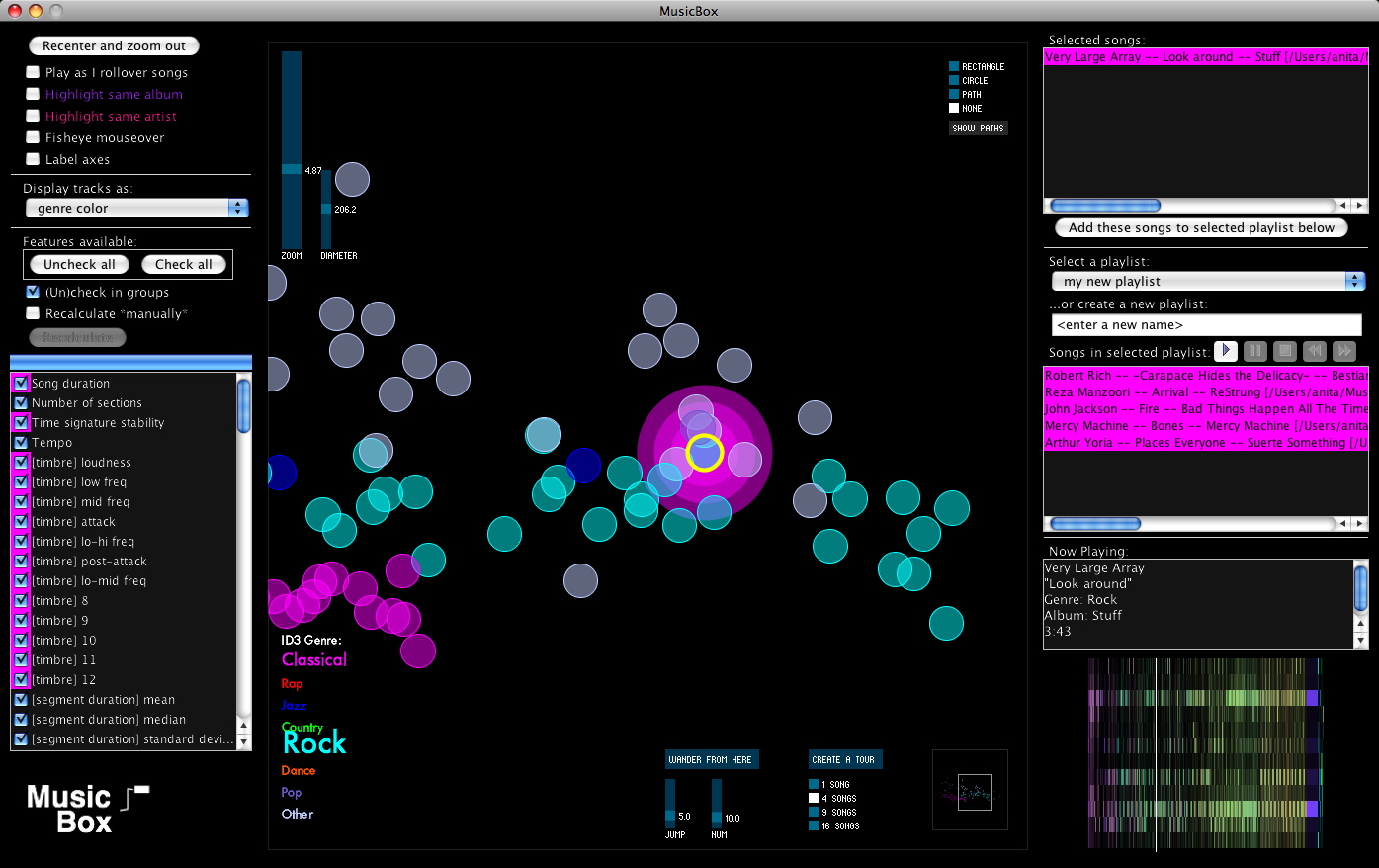

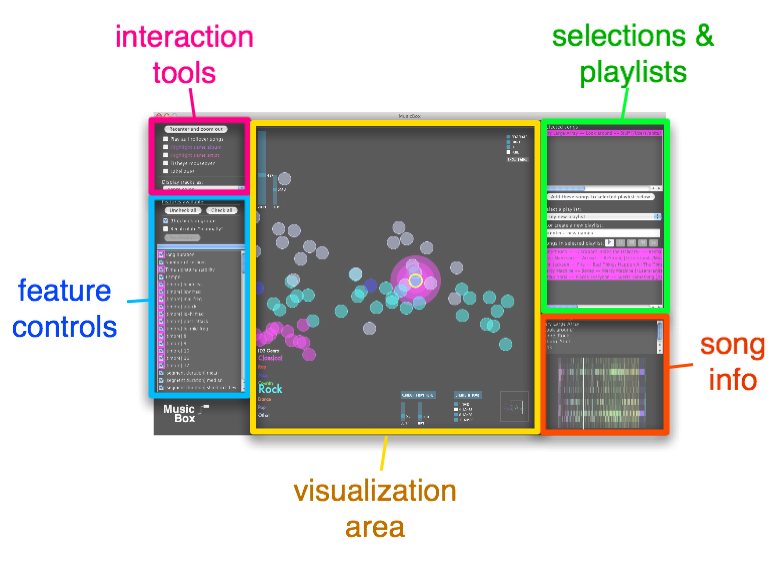

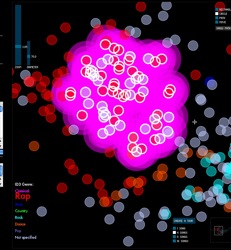

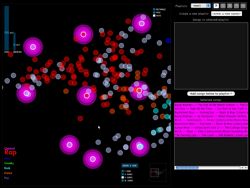

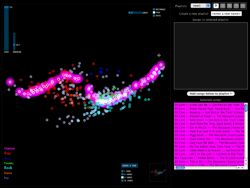

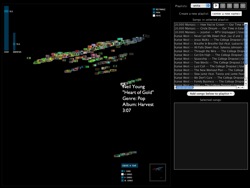

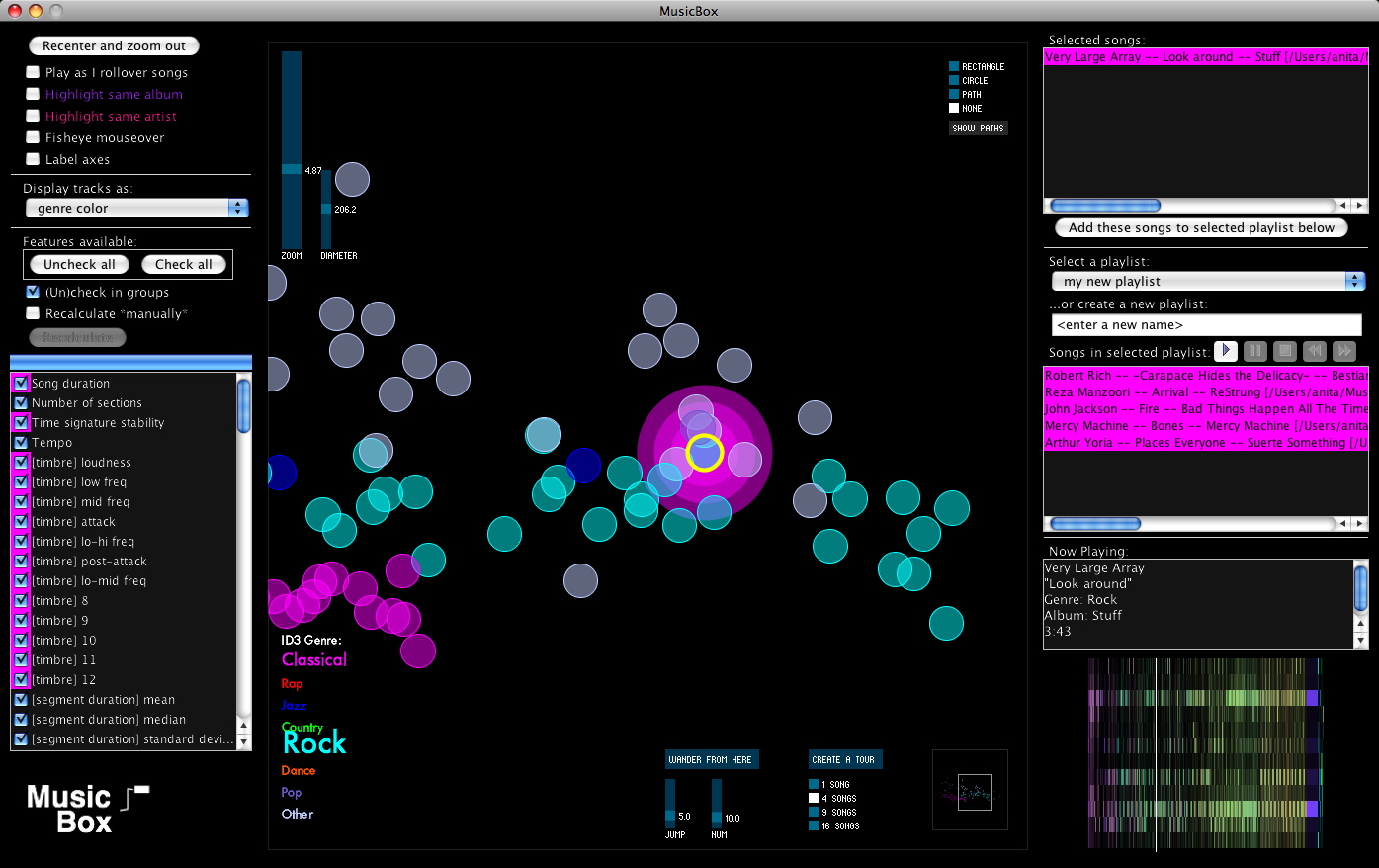

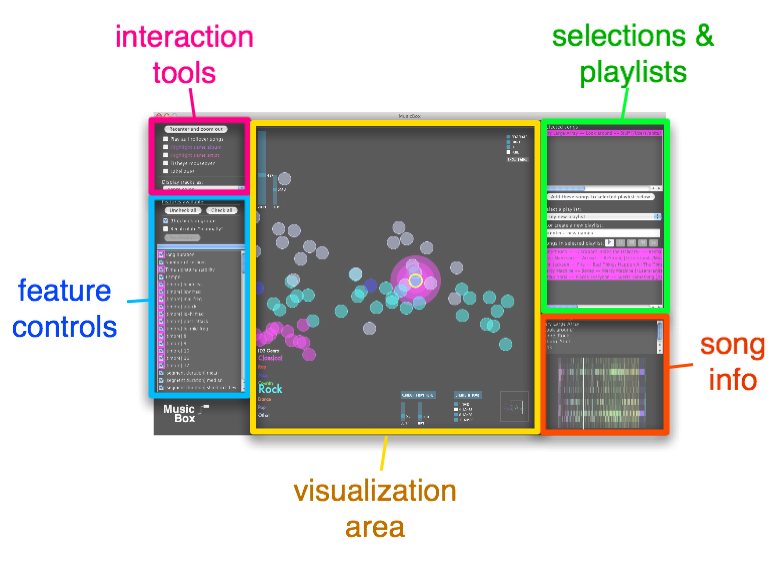

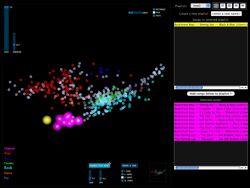

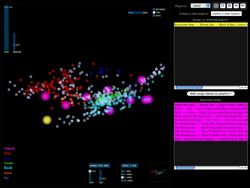

Here is what MusicBox looked like at the time my thesis was submitted:

A quick guide to the parts of this interface is displayed here:

(See my written thesis for much more detail.)


Posted by Anita on June 13th, 2008 — in screenshots

I had a good meeting with Paul today. We talked about a lot of things I need to think about, and a couple of them were simple features to add. Here are some screenshots.

Album art view

Paul says lots of people judge music based on its cover, and that viewing album art would be valuable to those kinds of people.

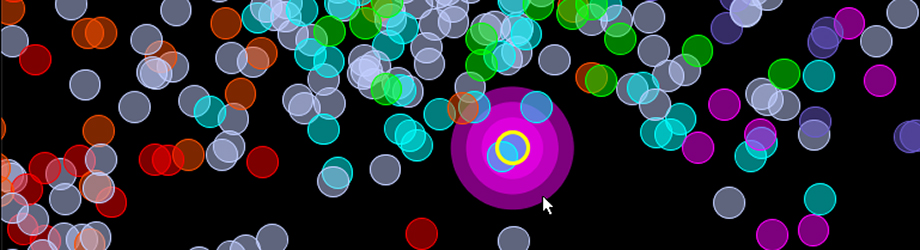

Circle-selection mode

For selecting songs within a particular distance of a given song.
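As a rough illustration of the idea (not the MusicBox code itself), circle selection can be sketched as a distance filter over the 2-D map positions. The `positions` dictionary and the function name below are hypothetical, and plain Euclidean distance on the map is an assumption.

```python
import math

def select_within_radius(positions, center_id, radius):
    """Return ids of songs whose map position lies within `radius` of the center song."""
    cx, cy = positions[center_id]
    return [
        song_id
        for song_id, (x, y) in positions.items()
        if song_id != center_id and math.hypot(x - cx, y - cy) <= radius
    ]

# Hypothetical map coordinates: song id -> (x, y)
positions = {"a": (0.0, 0.0), "b": (0.3, 0.4), "c": (2.0, 2.0)}
nearby = select_within_radius(positions, "a", 1.0)
```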


Posted by Anita on June 5th, 2008 — in audio features, contextual features, milestones, videos

Yesterday I set up MusicBox to animate transitions as you enable or disable features. I took a short video of this: it starts with no features enabled, and I add each feature in turn (or each set of features; some are enabled together as a block, like all allmusic.com tags at once). You can see the shape of the musical space change as each feature’s influence is added or removed.

The program is recalculating the space after every change, by performing a new principal components analysis, and then displaying all the tracks in their new locations.
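A minimal sketch of that recalculation step, assuming the features live in a tracks-by-features matrix and that toggling a feature simply includes or excludes its column. The function name, the example numbers, and the choice to standardize each column before the PCA are all assumptions made for illustration.

```python
import numpy as np

def project_tracks(features, enabled_columns, n_components=2):
    """Project tracks onto the top principal components of the enabled feature columns."""
    X = np.asarray(features, dtype=float)[:, enabled_columns]
    X = X - X.mean(axis=0)          # center each feature
    std = X.std(axis=0)
    std[std == 0] = 1.0             # leave constant features alone instead of dividing by zero
    X = X / std                     # assumption: scale features so units do not dominate
    # Rows of vt are the principal directions, ordered by explained variance.
    _, _, vt = np.linalg.svd(X, full_matrices=False)
    return X @ vt[:n_components].T

# Hypothetical data: 4 tracks, 3 features (the last one a binary tag)
features = [
    [0.1, 200.0, 1.0],
    [0.4, 180.0, 0.0],
    [0.9, 90.0, 1.0],
    [0.7, 120.0, 0.0],
]
coords_audio_only = project_tracks(features, enabled_columns=[0, 1])
coords_all = project_tracks(features, enabled_columns=[0, 1, 2])
```

Each call returns one (x, y) row per track, so enabling or disabling a feature means calling the function again with a different column list and redrawing.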

Click image to view QuickTime movie (14.2 MB).

The animation isn’t perfect, specifically because the scale can change dramatically when feature sets are changed, and I didn’t interpolate that properly (it’s too complicated given the way I’ve written my code… blech, lesson learned). But it still gives a good sense of how each song is moving throughout the changing space.
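One way the scale issue could be handled, sketched here under assumptions rather than taken from the MusicBox code: rescale both layouts to a common unit size first, then blend positions linearly. Both helper names are hypothetical.

```python
def normalize_layout(layout):
    """Shift and uniformly scale a layout (song id -> (x, y)) so its larger extent is 1."""
    xs = [x for x, _ in layout.values()]
    ys = [y for _, y in layout.values()]
    min_x, min_y = min(xs), min(ys)
    span = max(max(xs) - min_x, max(ys) - min_y) or 1.0
    return {k: ((x - min_x) / span, (y - min_y) / span) for k, (x, y) in layout.items()}

def interpolate_layout(old, new, t):
    """Blend two layouts at fraction t in [0, 1]; only songs present in both are kept."""
    return {
        song_id: (
            (1 - t) * old[song_id][0] + t * new[song_id][0],
            (1 - t) * old[song_id][1] + t * new[song_id][1],
        )
        for song_id in old.keys() & new.keys()
    }

# Hypothetical layouts before and after a feature set is toggled
old = normalize_layout({"a": (0.0, 0.0), "b": (10.0, 5.0)})
new = normalize_layout({"a": (0.0, 0.0), "b": (100.0, 100.0)})
halfway = interpolate_layout(old, new, 0.5)
```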


Posted by Anita on June 4th, 2008 — in milestones, screenshots

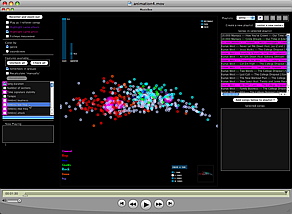

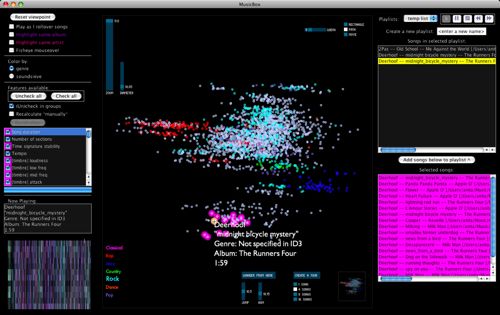

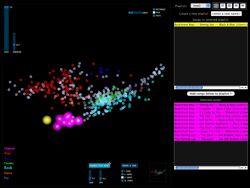

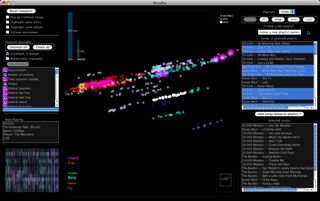

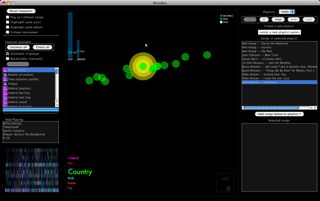

I’m trying to wrap up the main coding tasks this week. Here’s what the interface to the MusicBox software looks like today:

I’ve added a few new features, and done a fair amount of debugging, over the past 1-2 weeks. Here are screenshots of some of the new features…

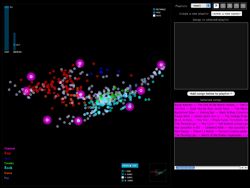

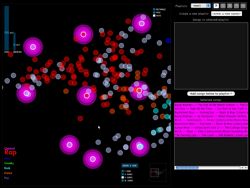

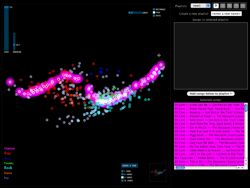

Create a tour

The “Create a tour” feature helps when you want to get a general idea of what music is sitting in front of you. You select a tour size, and the program selects that many songs, evenly dispersed through the space. With one click, you have a new playlist made up of a sampling of songs that represents the variety of music in the library.

In the screenshots, the glowing pink songs are the songs in the tour, and are automatically added to your working list of songs on the right. You can create a tour of whatever portion of the library you are currently viewing.
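The post does not say how the evenly dispersed songs are chosen. One common way to get a spread-out sample is farthest-point sampling, sketched below as an assumption rather than as the method MusicBox uses; the function name and `positions` data are hypothetical.

```python
import math

def create_tour(positions, tour_size, start_id=None):
    """Pick up to `tour_size` songs, each time taking the song farthest from those already chosen."""
    ids = list(positions)
    if not ids or tour_size <= 0:
        return []
    tour = [start_id if start_id is not None else ids[0]]
    chosen = {tour[0]}
    # Distance from every song to its nearest already-chosen tour song.
    nearest = {i: math.dist(positions[i], positions[tour[0]]) for i in ids}
    while len(tour) < min(tour_size, len(ids)):
        next_id = max((i for i in ids if i not in chosen), key=nearest.get)
        tour.append(next_id)
        chosen.add(next_id)
        for i in ids:
            nearest[i] = min(nearest[i], math.dist(positions[i], positions[next_id]))
    return tour

# Hypothetical positions of the songs currently in view
positions = {"a": (0.0, 0.0), "b": (0.1, 0.0), "c": (10.0, 0.0), "d": (5.0, 0.0)}
tour = create_tour(positions, tour_size=3, start_id="a")
```

Passing only the songs currently in view as `positions` would give a tour of that portion of the library.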

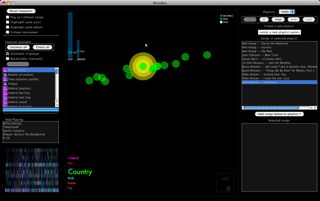

Wander from here

The “Wander from here” feature is a smart shuffle. If you like the song you are currently listening to, you can click on “Wander from here”, and the program will do a random walk through the space, starting with the current track. To choose each track, it will only venture a particular distance (that you set) from the previous track. This way, you can avoid those abrupt (and sometimes unpleasant) acoustic shifts you might experience in an iTunes shuffle.

In the screenshots, the yellow song is the current song, and the pink songs are those that have been selected for the “Wander from here” feature. The first screenshot shows a random walk with a small jump size (smoother acoustic changes from track to track), and the next shows one with a large jump size (more dramatic changes).
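The random walk can be sketched roughly as follows. The function name and data are hypothetical, and two details are assumptions not stated in the post: songs are not revisited, and the walk stops early if no unvisited song is within the jump size.

```python
import math
import random

def wander_from(positions, start_id, jump_size, length, rng=None):
    """Random walk over songs: each next song lies within `jump_size` of the previous one."""
    rng = rng or random.Random()
    walk = [start_id]
    visited = {start_id}
    while len(walk) < length:
        here = positions[walk[-1]]
        candidates = [
            song_id
            for song_id, pos in positions.items()
            if song_id not in visited and math.dist(here, pos) <= jump_size
        ]
        if not candidates:
            break  # nothing unvisited within reach, so stop early
        next_id = rng.choice(candidates)
        walk.append(next_id)
        visited.add(next_id)
    return walk

# Hypothetical positions; "d" is too far away to reach with a small jump size
positions = {"a": (0.0, 0.0), "b": (1.0, 0.0), "c": (2.0, 0.0), "d": (10.0, 0.0)}
walk = wander_from(positions, "a", jump_size=1.5, length=4, rng=random.Random(0))
```

A larger `jump_size` widens the candidate pool at every step, which corresponds to the more dramatic track-to-track changes in the second screenshot.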

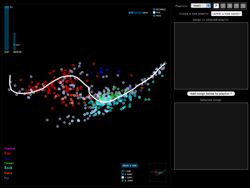

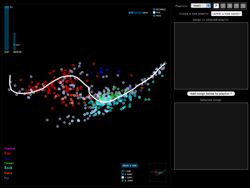

Playlists as paths

Say you want to start with your hard-hitting rap and hip-hop, and move step by step to your classical music. The playlist path function lets you do this by drawing a path through the space; it selects the songs along that path.

The first screenshot was taken while the user was drawing the path, and the next screenshot shows the resulting selection of songs along that path.
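A minimal sketch of selecting songs along a drawn path, assuming the path arrives as a list of (x, y) points and that a song counts as being on the path when it lies within some tolerance of any segment. The tolerance, the ordering by distance travelled along the path, and all names are assumptions for illustration.

```python
import math

def _project(p, a, b):
    """Return (distance from p to segment ab, fraction along ab of the closest point)."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    seg_len_sq = dx * dx + dy * dy
    if seg_len_sq == 0:
        t = 0.0
    else:
        t = max(0.0, min(1.0, ((px - ax) * dx + (py - ay) * dy) / seg_len_sq))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy)), t

def songs_along_path(positions, path, tolerance):
    """Return ids of songs within `tolerance` of the path, ordered from path start to end."""
    hits = []
    for song_id, p in positions.items():
        best = None          # (distance to path, distance travelled along path)
        travelled = 0.0
        for a, b in zip(path, path[1:]):
            seg_len = math.dist(a, b)
            d, t = _project(p, a, b)
            if d <= tolerance and (best is None or d < best[0]):
                best = (d, travelled + t * seg_len)
            travelled += seg_len
        if best is not None:
            hits.append((best[1], song_id))
    return [song_id for _, song_id in sorted(hits)]

# Hypothetical map: a straight path from the rap corner to the classical corner
positions = {
    "classical": (9.0, 0.1),
    "rap": (1.0, 0.2),
    "jazz": (5.0, -0.3),
    "far_away": (5.0, 5.0),
}
playlist = songs_along_path(positions, path=[(0.0, 0.0), (10.0, 0.0)], tolerance=0.5)
```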

Soundsieve view

Instead of viewing each song circle colored according to the genre in the ID3 tag, you can view each song as a mini soundsieve-style image. These images summarize some acoustic characteristics of each song, like a visual fingerprint. This view is inspired by my previous project on visualizing music, soundsieve.


Posted by Anita on May 23rd, 2008 — in screenshots

Amongst the new additions:

- full playlist integration

- showing genre legend with elements sized by number of occurrences

- individual song images (from soundsieve project)


Posted by Anita on May 20th, 2008 — in milestones, videos

I showed Paul this video (13 MB) last week, but really anyone should be able to see it. The interface is changing every day, so it doesn’t look the same as this now, but this video shows:

- general interaction: rollover play, panning, zooming, etc.

- turning features on/off and seeing effect on the space

- playlist creation: selection with rectangle or path (not quite finished when the video was made)


Posted by Anita on April 20th, 2008 — in audio features, contextual features, screenshots

I implemented the first set of contextual data for my test set of music. I’m grabbing moods off of allmusic.com. These are descriptors like “spooky”, “lively”, or “epic”, which are usually listed per album. I’m incorporating these features into the PCA by treating each descriptor as a separate feature, giving each song with the given descriptor a “1”, and all others a “0”. These contextual data are being added into the model that already has audio-based data.
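The 1/0 encoding described above can be sketched like this. The song ids, mood tags, and audio numbers are made up for illustration, and appending the mood columns directly after the audio columns is an assumption about how the two kinds of data are combined.

```python
def mood_features(song_moods):
    """Turn {song id: set of mood tags} into one binary column per distinct mood."""
    vocabulary = sorted({mood for moods in song_moods.values() for mood in moods})
    rows = {
        song_id: [1 if mood in moods else 0 for mood in vocabulary]
        for song_id, moods in song_moods.items()
    }
    return vocabulary, rows

# Hypothetical mood tags (usually listed per album, so album-mates share a row)
song_moods = {
    "track1": {"spooky", "epic"},
    "track2": {"lively"},
}
# Hypothetical audio-based features already in the model
audio = {"track1": [0.2, 0.7], "track2": [0.9, 0.1]}

vocabulary, mood_rows = mood_features(song_moods)
combined = {song_id: audio[song_id] + mood_rows[song_id] for song_id in audio}
```

Because songs from the same album get identical mood columns, only their audio columns can tell them apart.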

As expected, this stratifies the whole data set, and is an interesting way of separating out the albums… those albums with the same sets of mood tags appear in a stripe across the space, and their position in the stripe comes from the audio features. If I had to guess what the audio feature separation means here, I’d say it’s distributing music on a spectrum of edgier, more bursty sounds (on the left in this visual representation) to smoother, softer sounds (on the right). I am guessing this purely from inspection.

Here are some examples:

- I see a stripe of this album: Backstreet Boys — “Black & Blue”. It moves from “Shining Star” (click to listen; you need Windows Media Player) on the edgy side, to “How Did I Fall In Love With You” (click to listen IF YOU DARE; you need Windows Media Player) on the smooth side.

- I see a stripe of this album: The Beatles — “Sgt. Pepper’s Lonely Hearts Club Band”. It moves from “Getting Better” on the edgy side, to “Lovely Rita” in the middle, to “A Day In the Life” on the smooth side.

- I see a stripe of this album: 10,000 Maniacs — “Our Time in Eden”. It moves from “Candy Everyone Wants” on the edgy side, to “How You’ve Grown” on the smooth side.

Also, Busta Rhymes’ smoothest piece in the test set, “Hot Fudge”, is way less smooth than the Beatles’ edgiest piece.


Posted by Anita on April 15th, 2008 — in audio features, feedback

My newest problem is one that I knew I’d come across eventually: What do I do with songs that have missing data? This most recently came up when I was adapting Thomas Lidy’s rhythm feature code… It couldn’t open some of the MP3s in my test set, so I have no rhythm feature data for those songs. Lacking a better idea, I just gave them the mean values of all the other songs. But this doesn’t seem right… I can’t really give them any value. But if I don’t give them any values, the PCA can’t process these tracks anymore; I can’t put them in the space at all.

So, not sure what to do about these songs. Anyone have any suggestions?
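For reference, the mean-value stopgap described above can be sketched as follows, with missing values represented as `None` (an assumption about the data layout; the function name is hypothetical).

```python
from statistics import fmean

def fill_missing_with_mean(rows):
    """Replace None entries in each column with the mean of that column's known values."""
    columns = list(zip(*rows))
    means = []
    for column in columns:
        known = [value for value in column if value is not None]
        means.append(fmean(known) if known else 0.0)  # all-missing column: fall back to 0.0
    return [
        [mean if value is None else value for value, mean in zip(row, means)]
        for row in rows
    ]

# Hypothetical rhythm features; None marks songs the extractor could not open
rows = [
    [1.0, 2.0],
    [None, 4.0],
    [3.0, None],
]
filled = fill_missing_with_mean(rows)
```

Filling in the column mean leaves that column's mean unchanged, so once the data is centered for the PCA the filled-in values become zero and those features no longer help position the affected songs.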


Posted by Anita on April 15th, 2008 — in audio features, screenshots

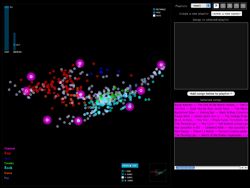

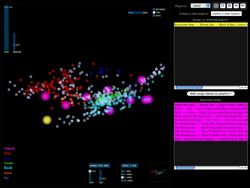

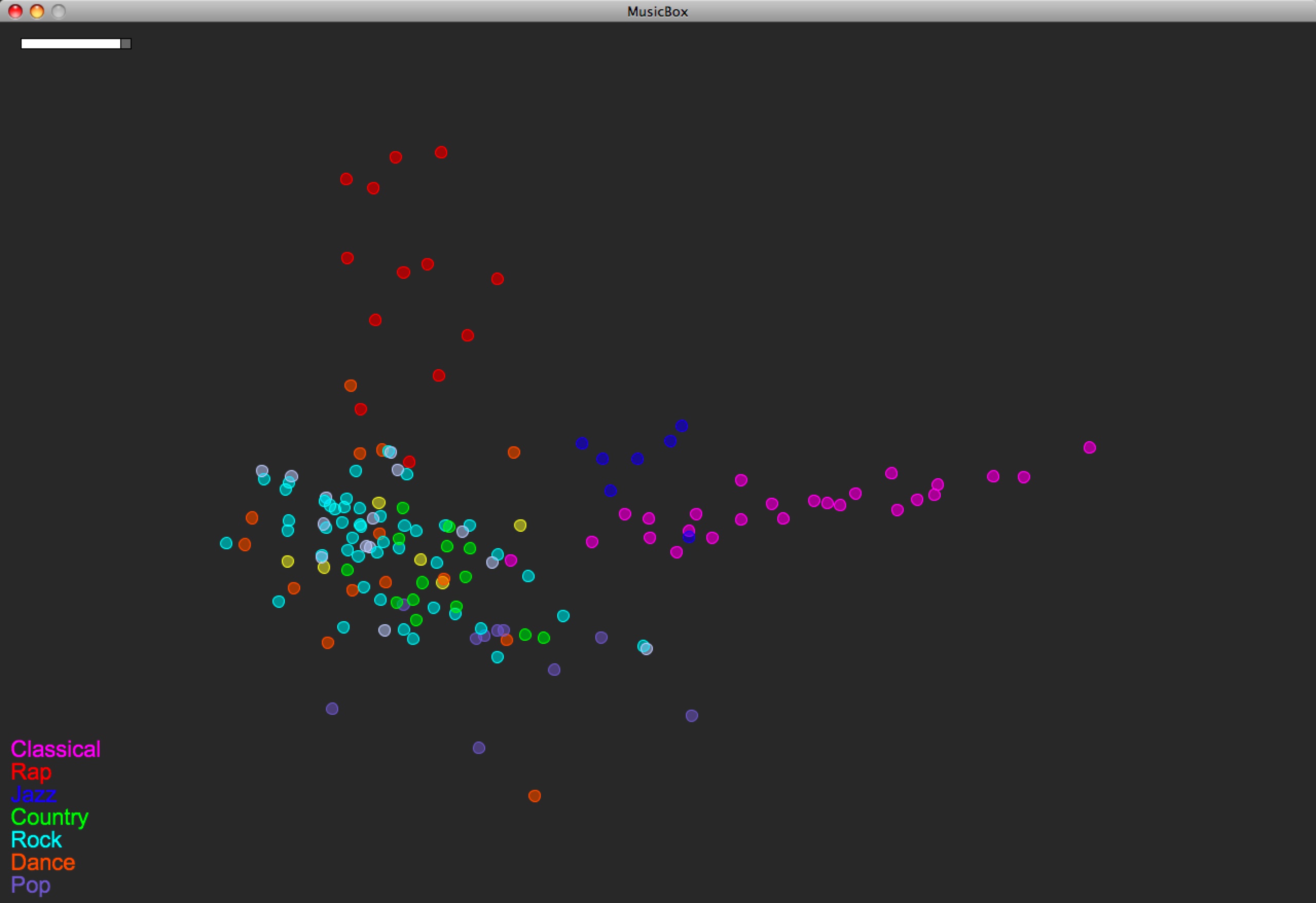

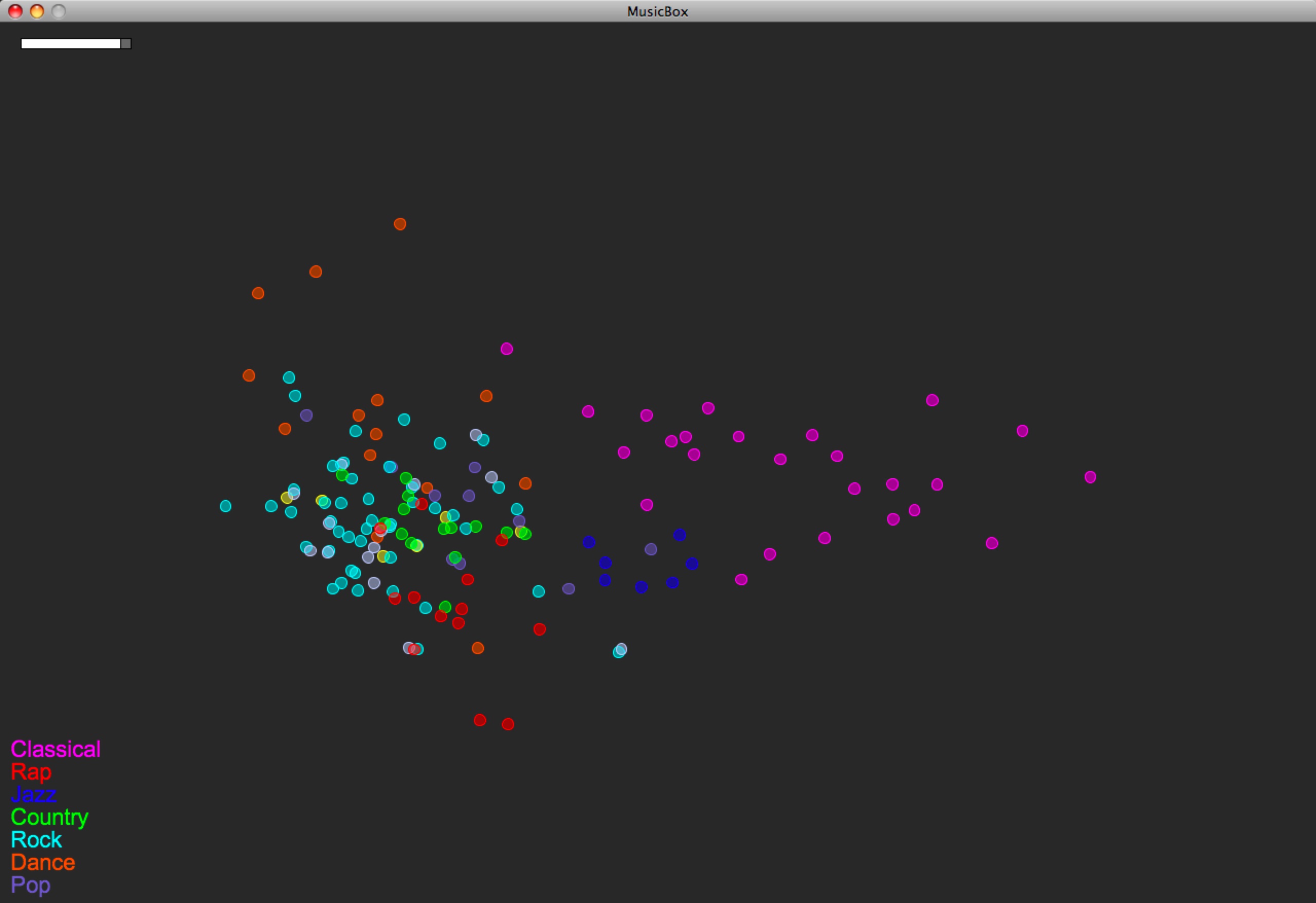

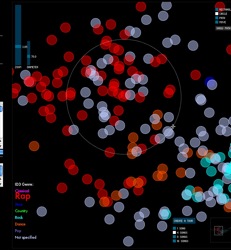

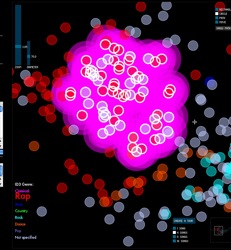

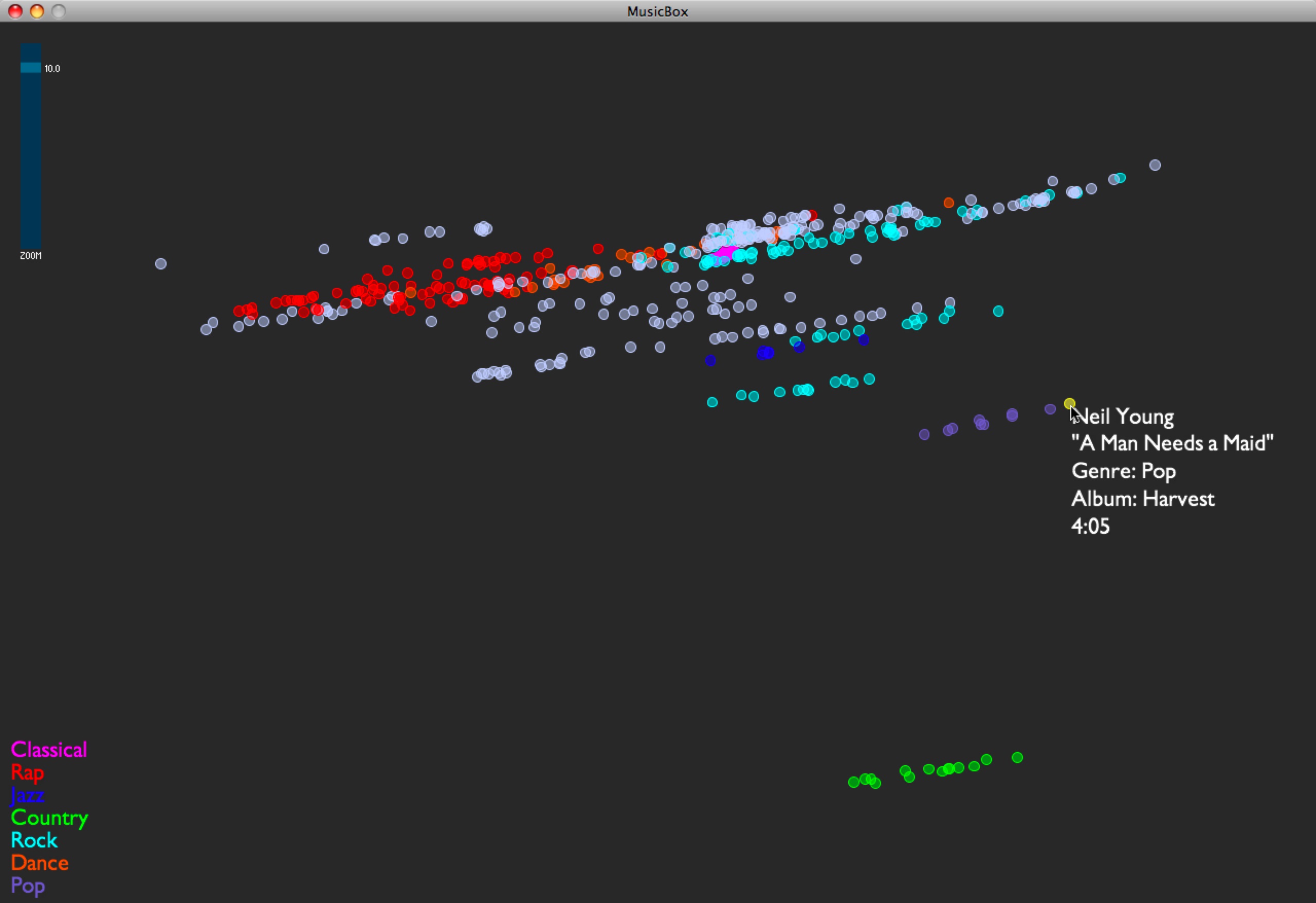

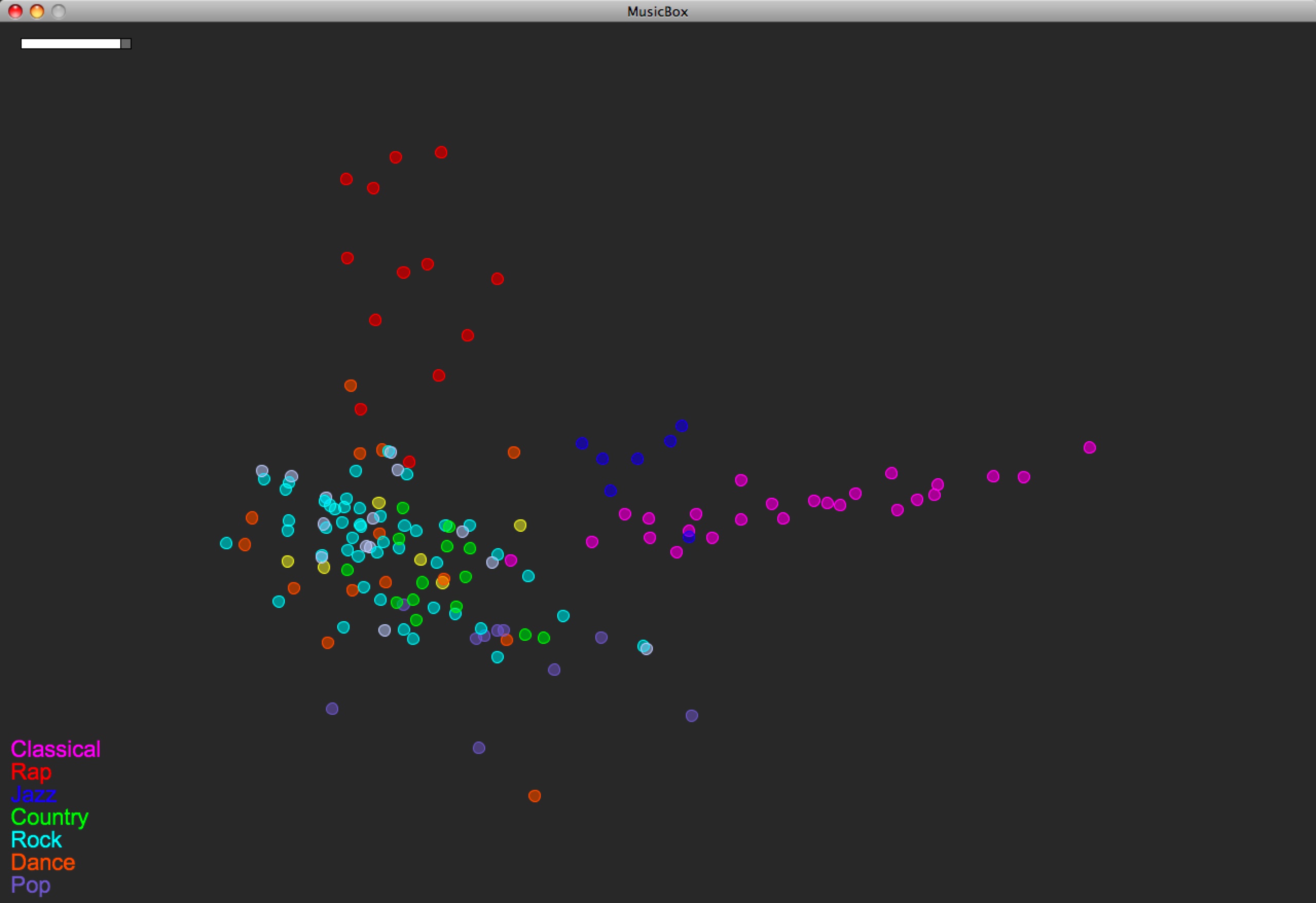

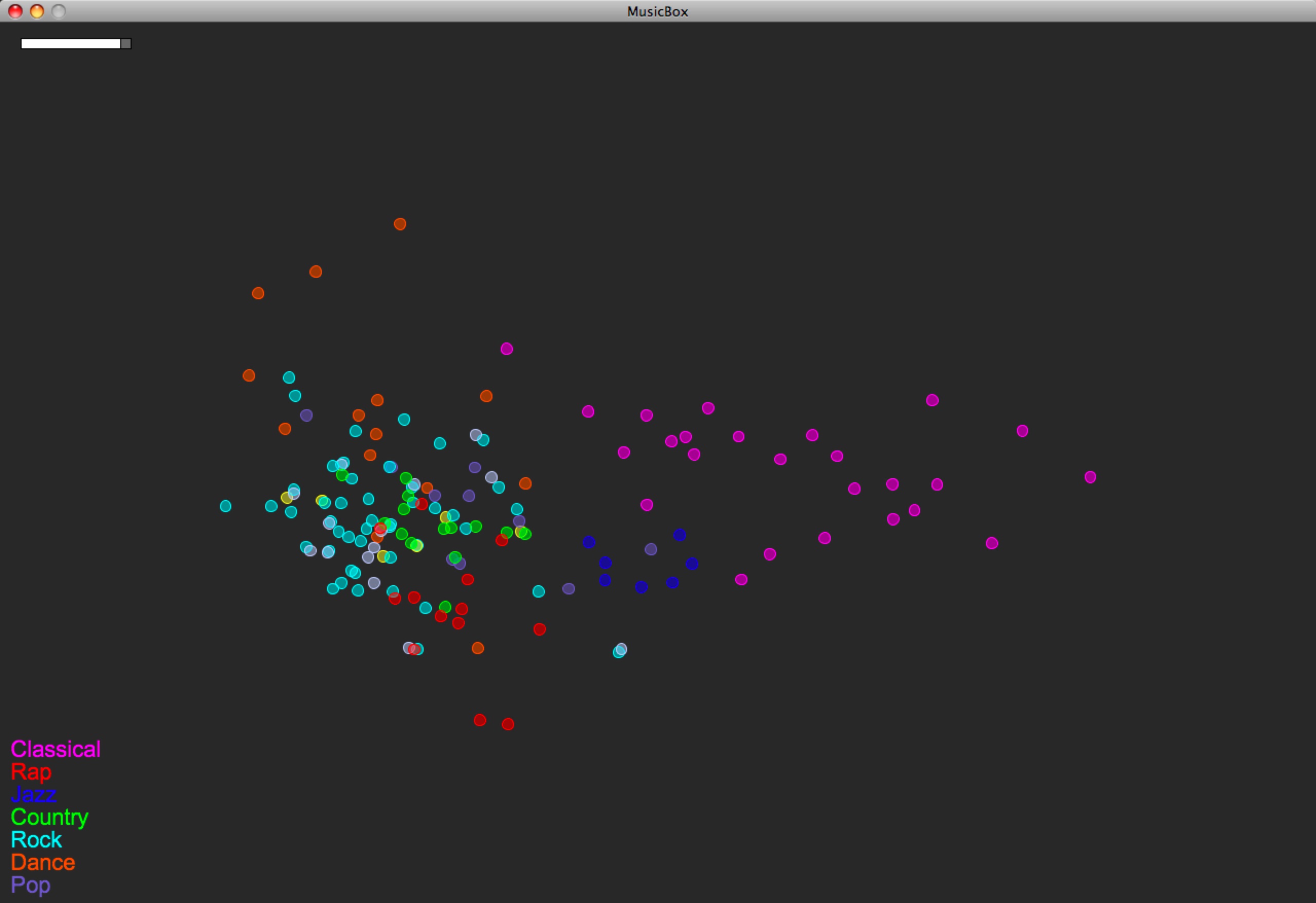

I was able to add some rhythm features to the model. I’m using Thomas Lidy and Andreas Rauber’s Rhythm Histograms (RH), summarizing each histogram (one histogram per song) with four features: mean, median, standard deviation, and (at Thomas’s recommendation) kurtosis. Here’s how the map of the same music library looks now:

You can compare with the last version, before RH features were used:

Unfortunately the Rhythm Histogram code couldn’t open my classical music, so you have to disregard that data (to which I gave the mean value of the rest of the songs’ RH data).

So the main thing that happened here is that the rap music popped out. And perhaps a wee bit the jazz.
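The four-number summary of each Rhythm Histogram mentioned above could be computed along these lines. This is a sketch under assumptions: the statistics are taken over the histogram's bin values, the standard deviation is the population form, and kurtosis is the plain (non-excess) fourth standardized moment. The function name and example bins are hypothetical.

```python
from statistics import fmean, median, pstdev

def summarize_histogram(bins):
    """Summarize a histogram's bin values as [mean, median, standard deviation, kurtosis]."""
    mu = fmean(bins)
    sigma = pstdev(bins)
    if sigma == 0:
        kurt = 0.0  # flat histogram: kurtosis is undefined, so use 0.0 as a placeholder
    else:
        kurt = fmean([((value - mu) / sigma) ** 4 for value in bins])
    return [mu, median(bins), sigma, kurt]

# Hypothetical (and much shorter than real) histogram for one song
summary = summarize_histogram([1.0, 2.0, 3.0, 4.0, 5.0])
```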
